A/B testing is a powerful tool for optimizing your marketing efforts. It helps you make data-driven decisions.

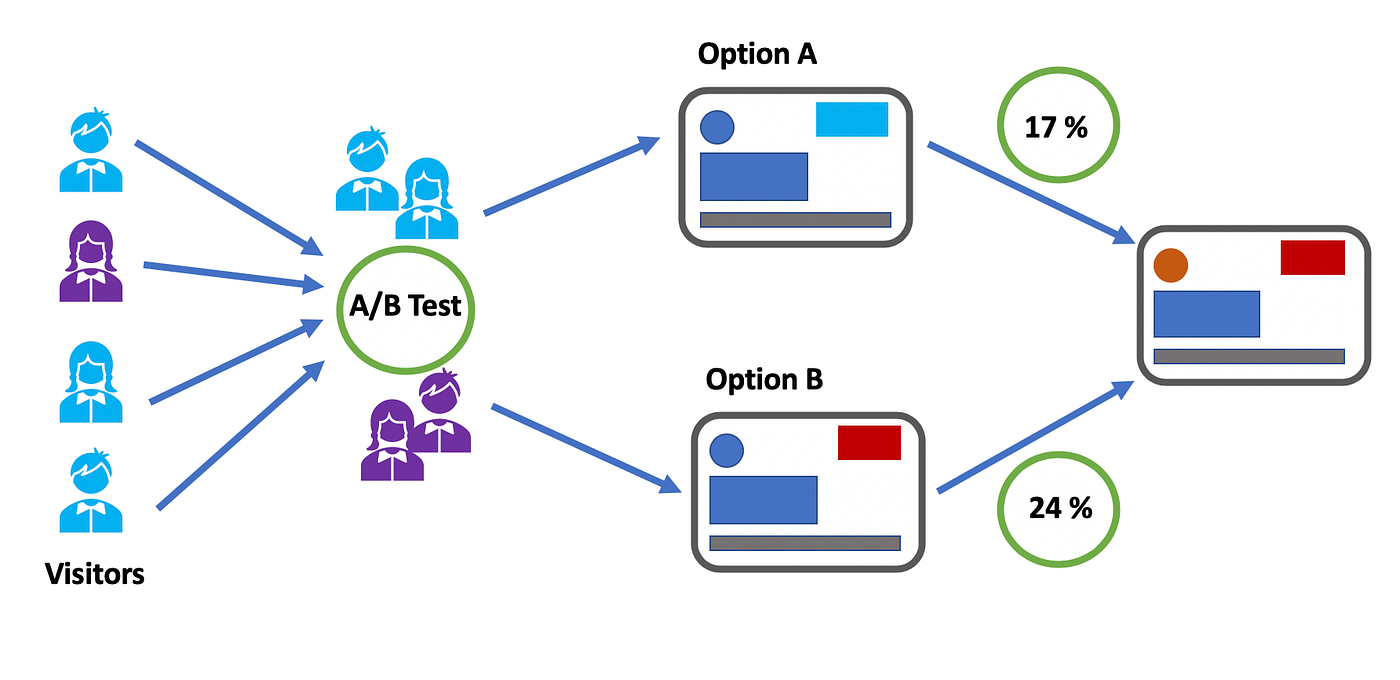

In this blog post, we will explore various A/B testing strategies that can elevate your campaigns. A/B testing involves comparing two versions of a web page, email, or ad to see which performs better. This technique allows you to test different elements and find out what resonates best with your audience.

By understanding the impact of small changes, you can improve conversion rates and overall performance. Whether you are tweaking headlines, images, or call-to-action buttons, A/B testing provides valuable insights. Dive in to discover effective A/B testing strategies and how they can help you refine your marketing tactics.

Introduction To A/B Testing

A/B testing is a powerful tool for understanding user preferences and making data-driven decisions. It compares two versions of a webpage or app: one is the control, and the other is the variation. Measuring how each one performs shows which version works better.

What Is A/B Testing?

A/B testing is a method used to compare two versions of a webpage or app. Version A is the control, and version B is the variation. Users are randomly split into groups, and each group is shown one version. The goal is to see which version performs better on a metric you chose in advance.

| Aspect | Version A (Control) | Version B (Variation) |
|---|---|---|
| Headline | Original Headline | New Headline |
| Button Color | Blue | Green |
| Call to Action | Sign Up | Join Now |

Importance Of A/B Testing

A/B testing matters for several reasons. First, it helps improve user experience: by testing different versions, you can find what works best for your audience. Second, it can increase conversion rates, because a better user experience often leads to more sign-ups or sales. Third, it can reduce bounce rates by showing which version keeps visitors on the page.

- Improves user experience

- Increases conversion rates

- Reduces bounce rates

In summary, A/B testing helps businesses make informed decisions, which leads to a better user experience and higher conversion rates.


Setting Clear Goals

Setting clear goals is crucial for successful A/B testing. It ensures you measure the right metrics and make data-driven decisions. Without clear goals, your tests may not yield meaningful results.

Defining Success Metrics

To set clear goals, start by defining success metrics. These metrics help you track progress and measure the impact of your changes.

Consider what you want to achieve with your A/B test. Do you want to increase conversions, improve user engagement, or reduce bounce rates? These questions will guide you in selecting relevant metrics.

Here’s a table to help you identify common success metrics:

| Goal | Success Metric |
|---|---|
| Increase Conversions | Conversion Rate |
| Improve User Engagement | Average Session Duration |
| Reduce Bounce Rate | Bounce Rate |
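To make the metrics in the table concrete, here is a minimal Python sketch of how they might be computed from raw session data. The `Session` record and its fields are hypothetical, and the sketch assumes a "bounce" means a session with exactly one page view; your analytics tool may define these differently.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """One visit to the site (hypothetical record layout)."""
    pages_viewed: int        # number of pages seen during the visit
    duration_seconds: float  # total time spent during the visit
    converted: bool          # True if the visit reached the goal (e.g. a sign-up)


def conversion_rate(sessions):
    """Share of sessions that ended in a conversion."""
    return sum(s.converted for s in sessions) / len(sessions)


def bounce_rate(sessions):
    """Share of sessions that viewed exactly one page (assumed definition)."""
    return sum(s.pages_viewed == 1 for s in sessions) / len(sessions)


def average_session_duration(sessions):
    """Mean session length in seconds."""
    return sum(s.duration_seconds for s in sessions) / len(sessions)


# Made-up sample data, for illustration only.
sessions = [
    Session(pages_viewed=1, duration_seconds=10.0, converted=False),
    Session(pages_viewed=4, duration_seconds=120.0, converted=True),
    Session(pages_viewed=2, duration_seconds=50.0, converted=False),
    Session(pages_viewed=1, duration_seconds=20.0, converted=False),
]

print(conversion_rate(sessions))
print(bounce_rate(sessions))
print(average_session_duration(sessions))
```

Picking one of these as the primary metric before the test starts keeps the later analysis focused.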

Establishing Hypotheses

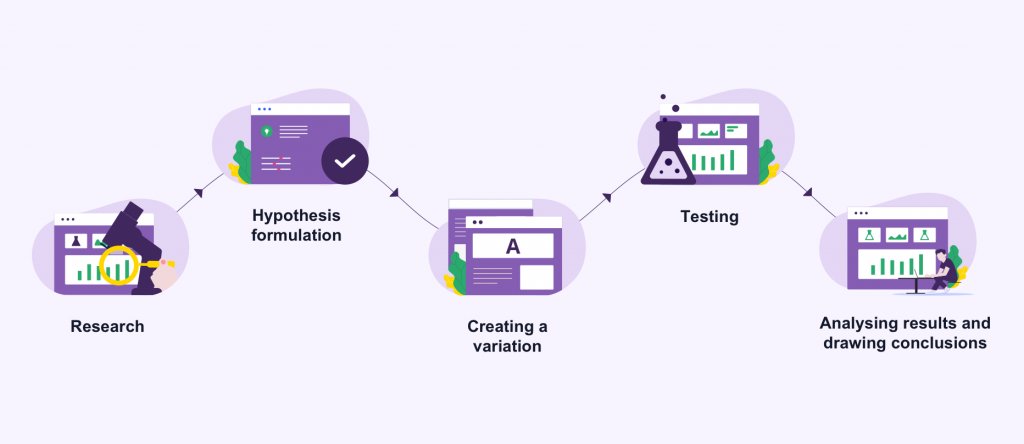

Once you have defined your success metrics, focus on establishing hypotheses. A hypothesis is a statement you can test. It should be clear and testable.

Follow these steps to create a strong hypothesis:

- Identify the problem or area for improvement.

- Propose a change that might solve the problem.

- Predict the outcome of the change.

For example:

- Problem: Low conversion rate on the checkout page.

- Change: Simplify the checkout form.

- Prediction: Simplifying the form will increase the conversion rate.

By establishing clear hypotheses, you can focus your tests and gather meaningful data.

Choosing The Right Elements To Test

Choosing the right elements to test in an A/B testing strategy is crucial. It can significantly impact your conversion rates and user experience. Prioritizing the elements that have the most influence on user behavior is key. Here, we will discuss three important elements to test: headlines, CTAs, and layouts and designs.

Testing Headlines

Headlines are often the first thing users see. They can make or break your content’s effectiveness. A compelling headline can grab attention and improve engagement.

Consider testing different headline variations:

- Short vs. long headlines

- Question vs. statement

- Incorporating numbers or stats

- Using power words

Track metrics such as click-through rates (CTR) and bounce rates to determine the most effective headline.

Testing CTAs

Calls to action (CTAs) guide users toward desired actions. Testing different CTAs can significantly boost conversions.

Focus on these aspects:

- Button color

- Button text

- Button size

- Placement on the page

Monitor conversion rates to identify the most effective CTA elements.

Testing Layouts And Designs

Layouts and designs influence user experience and engagement. A well-designed layout can lead to higher user satisfaction and conversions.

Consider testing:

- Page structure

- Navigation menu

- Image placements

- Font styles and sizes

Evaluate metrics like time on site and conversion rates to determine the best design elements.

| Element | Aspects to Test | Metrics to Track |
|---|---|---|
| Headlines | Length, format, numbers, power words | CTR, bounce rates |
| CTAs | Color, text, size, placement | Conversion rates |
| Layouts and Designs | Structure, navigation, images, fonts | Time on site, conversion rates |

Creating Variations

Creating variations is a key step in A/B testing. This process involves making different versions of your webpage or app elements. Each version is tested to see which performs better. This helps you understand what changes can improve user experience and conversions.

Developing Test Variants

When developing test variants, it’s important to change only one element at a time. This ensures that you can clearly identify what caused any differences in performance. Here are some common elements to test:

- Headlines: Try different headlines to see which grabs attention.

- Call-to-Action (CTA) buttons: Test different colors, sizes, and texts.

- Images: Use different images to see which one resonates more.

- Layout: Change the layout to see if it affects user engagement.

Using these elements, create multiple versions (A, B, C, etc.). Ensure each version has a unique feature that differentiates it from the others. This method helps pinpoint what works best.
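One way to enforce the "one element at a time" rule is to describe each version as a small set of settings and check how it differs from the control. The sketch below is only an illustration: the setting names (`headline`, `button_color`, `cta_text`) and the helper functions are hypothetical, not part of any testing tool.

```python
# Control version, described as a plain dictionary of page elements.
CONTROL = {
    "headline": "Original Headline",
    "button_color": "blue",
    "cta_text": "Sign Up",
}


def changed_elements(control, variant):
    """Return the sorted names of elements whose values differ from the control."""
    keys = set(control) | set(variant)
    return sorted(k for k in keys if control.get(k) != variant.get(k))


def is_single_change(control, variant):
    """True when the variant differs from the control in exactly one element."""
    return len(changed_elements(control, variant)) == 1


# Variant B changes only the button color.
variant_b = {**CONTROL, "button_color": "green"}

# Variant C changes two things at once, so its result would be hard to attribute.
variant_c = {**CONTROL, "headline": "New Headline", "cta_text": "Join Now"}

print(changed_elements(CONTROL, variant_b), is_single_change(CONTROL, variant_b))
print(changed_elements(CONTROL, variant_c), is_single_change(CONTROL, variant_c))
```

A check like this can run before a test goes live, so a variant that accidentally changes several elements is caught early.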

Ensuring Consistency

Ensuring consistency across test variants is crucial. Each variant should provide a uniform experience. This means maintaining the same branding, tone, and style. Consistency helps in obtaining accurate test results.

Here are some tips to ensure consistency:

- Branding: Keep your logo, colors, and fonts consistent.

- Content: Ensure the message and tone remain the same.

- Functionality: All variants should work smoothly without bugs.

Maintaining these aspects ensures that any change in user behavior is due to the tested element, not other factors.

Creating variations and ensuring consistency helps you make informed decisions. This approach improves your website or app performance effectively.

Segmenting Your Audience

A/B testing allows you to compare two versions of a webpage or app to determine which performs better. Overall results can hide differences between groups of users, so it often helps to segment your audience. This involves dividing your audience into smaller groups whose members share similar characteristics. Analyzing segments separately can surface insights that the aggregate numbers miss.

Identifying Key Segments

Start by identifying key segments of your audience. Look at factors like:

- Demographics: Age, gender, income level.

- Geographic Location: Country, city, region.

- Behavioral Patterns: Purchase history, browsing habits.

- Technology Use: Device type, browser, operating system.

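Use these segments to tailor your analysis. For example, show both versions of a landing page to younger and older users alike, then compare the two versions within each age group. Showing one version only to younger users and the other only to older users would mix up the effect of the page with the effect of age. Comparing within groups helps you understand which version works best for each group.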

Personalizing Test Experiences

Once you have identified key segments, personalize the test experiences. This means creating different versions of your test based on the segments.

Here’s how to do it:

- Create multiple versions of the page or app.

- Assign each version to a specific segment.

- Analyze the results for each segment separately.

For example, if one segment prefers videos, show them a version with more videos. If another segment responds better to text, show them a version with more written content.

Personalizing test experiences can lead to better engagement. It ensures that each user gets the most relevant version.
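Analyzing results for each segment separately can be sketched in a few lines of Python. The record layout (segment, variant, converted) and the sample data below are made up for illustration, and the sample is far too small to draw real conclusions from.

```python
from collections import defaultdict


def conversion_by_segment(records):
    """Compute the conversion rate for every (segment, variant) pair.

    records: iterable of (segment, variant, converted) tuples.
    """
    counts = defaultdict(lambda: [0, 0])  # (segment, variant) -> [conversions, visitors]
    for segment, variant, converted in records:
        counts[(segment, variant)][0] += int(converted)
        counts[(segment, variant)][1] += 1
    return {key: conv / total for key, (conv, total) in counts.items()}


# Hypothetical test log: both versions were shown within each segment.
records = [
    ("mobile", "A", True), ("mobile", "A", False),
    ("mobile", "B", True), ("mobile", "B", True),
    ("desktop", "A", True), ("desktop", "A", True),
    ("desktop", "B", False), ("desktop", "B", True),
]

rates = conversion_by_segment(records)
for (segment, variant), rate in sorted(rates.items()):
    print(f"{segment} / version {variant}: {rate:.0%}")
```

In this toy data the two segments prefer different versions, which is exactly the kind of pattern an overall average would hide.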


Running The Test

Running the test is where all your planning and preparation come into action. In this phase, you execute your test and gather the data you need to make informed decisions. Let’s explore the steps involved.

Implementing The Test

The first step is implementing the test. Start by creating two versions of your webpage or product feature. Label them as A and B. Version A is the control, and version B is the variant. Use A/B testing tools to randomly split your audience into two groups. Ensure each user sees only one version for the whole test.

Here is a simple table to illustrate the process:

| Group | Version | Percentage of Audience |
|---|---|---|
| Group 1 | Version A (Control) | 50% |
| Group 2 | Version B (Variant) | 50% |

Ensure the changes are minor but impactful. This could be a different headline, call-to-action, or image. Avoid making too many changes at once. This ensures clear results.
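Most A/B testing tools handle the 50/50 split for you. If you are curious how it can work under the hood, here is a minimal sketch of one common approach: hashing a user ID so the same user always lands in the same group. The function name `assign_variant` and the experiment name are hypothetical, and real tools may do this differently.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "homepage-headline") -> str:
    """Deterministically assign a user to version 'A' or 'B' with a 50/50 split.

    Hashing the experiment name together with the user ID means the same user
    always gets the same version, and different experiments split independently.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # a number from 0 to 99
    return "A" if bucket < 50 else "B"


# The same user gets the same version on every visit.
print(assign_variant("user-42"), assign_variant("user-42"))

# Across many users, the split comes out close to 50/50.
users = [f"user-{i}" for i in range(10_000)]
share_a = sum(assign_variant(u) == "A" for u in users) / len(users)
print(f"Share of users in version A: {share_a:.1%}")
```

Because the assignment depends only on the ID, no lookup table is needed to remember who saw which version.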

Monitoring Progress

Once the test is live, monitoring progress is essential. Use analytics tools to track user interactions. Focus on key metrics like conversion rates, click-through rates, and bounce rates. This data will help you understand user behavior.

Here are some steps to monitor progress effectively:

- Check each version daily to catch technical problems, but avoid declaring a winner from early numbers.

- Look for significant differences in user behavior.

- Ensure the sample size is large enough for accurate results.

Keep an eye on external factors like holidays or promotions, which can skew your results. Run the test long enough to reach your planned sample size and to cover complete weekly cycles, since behavior often differs between weekdays and weekends. Two weeks to a month is a common range.

By following these steps, you can ensure your A/B test runs smoothly. This will lead to valuable insights and better decision-making.

Analyzing Results

A/B testing is a powerful tool for understanding what works best for your audience. But the real value comes from analyzing the results. This process helps determine which version performed better and why. Proper analysis ensures your decisions are backed by data, not assumptions.

Interpreting Data

Interpreting data is crucial in A/B testing. Look beyond the surface-level numbers. Consider metrics like conversion rates, click-through rates, and bounce rates.

Use tables to organize your data for easy comparison. Here is an example:

| Metric | Version A | Version B |
|---|---|---|
| Conversion Rate | 3.5% | 4.2% |
| Click-Through Rate | 8.0% | 9.1% |
| Bounce Rate | 50% | 45% |

From this table, you can see Version B has a higher conversion rate and click-through rate, and a lower bounce rate. This suggests Version B is more effective, provided the differences hold up under a statistical significance check.
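It often helps to express these differences as a relative change rather than raw percentage points. The short sketch below does this for the example numbers in the table above; the function name `relative_change` is just an illustrative choice.

```python
def relative_change(control: float, variant: float) -> float:
    """Relative change of the variant versus the control, as a fraction."""
    return (variant - control) / control


# Example figures from the table above, in percent: (Version A, Version B).
results = {
    "Conversion Rate": (3.5, 4.2),
    "Click-Through Rate": (8.0, 9.1),
    "Bounce Rate": (50.0, 45.0),
}

for metric, (version_a, version_b) in results.items():
    change = relative_change(version_a, version_b)
    print(f"{metric}: {change:+.1%} relative change")
```

For the conversion rate, a move from 3.5% to 4.2% is only 0.7 percentage points, but it is a relative lift of about 20%. Being clear about which of the two you are reporting avoids confusion.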

Statistical Significance

Statistical significance is key in A/B testing. It tells you how unlikely your observed difference would be if the two versions actually performed the same. Use a significance calculator to check whether the difference between versions is likely to be real rather than random noise.

Here are steps to determine statistical significance:

- Set a confidence level, often 95%.

- Calculate the p-value of your data.

- If the p-value is below 0.05, the result is significant.

Statistical significance adds credibility to your findings. It does not guarantee that the winning version is truly better, but it makes it much less likely that you are acting on random noise.
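If you want to see what a significance calculator does, the steps above can be sketched with a two-proportion z-test, one common choice for comparing conversion rates. This is a simplified illustration using only the Python standard library; the visitor and conversion counts are hypothetical, and your tool may use a different test.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for the difference between two conversion rates.

    conv_a, conv_b: number of conversions in each version.
    n_a, n_b: number of visitors in each version.
    Returns (z, p_value).
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the assumption that both versions perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / standard_error
    # Two-sided tail probability of the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical test: 10,000 visitors per version, 350 vs. 420 conversions.
z, p_value = two_proportion_z_test(350, 10_000, 420, 10_000)
print(f"z = {z:.2f}, p-value = {p_value:.4f}")
print("Significant at the 95% level" if p_value < 0.05 else "Not significant")
```

With these made-up numbers the p-value comes out below 0.05, so the result would count as significant at the 95% confidence level. The same conversion rates with far fewer visitors would not.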


Optimizing Based On Insights

Optimizing based on insights is the essence of successful A/B testing. Once you have collected data from your tests, the next step is to turn that data into actionable insights. This process involves analyzing the results to understand what worked and what didn’t. By focusing on optimization, you can continuously improve your website’s performance.

Applying Findings

After gathering data, it’s crucial to apply the findings. Start by identifying the winning variant. Look for patterns that show why one version performed better than the other. This step involves deep analysis and understanding user behavior.

- Identify key metrics: conversions, bounce rates, and time on page.

- Compare these metrics between the control and the variant.

- Look for significant differences that indicate success.

Once you’ve identified the successful elements, implement these changes on your website. This could mean updating headlines, altering call-to-action buttons, or redesigning page layouts. The goal is to replicate the success of the winning variant across your site.

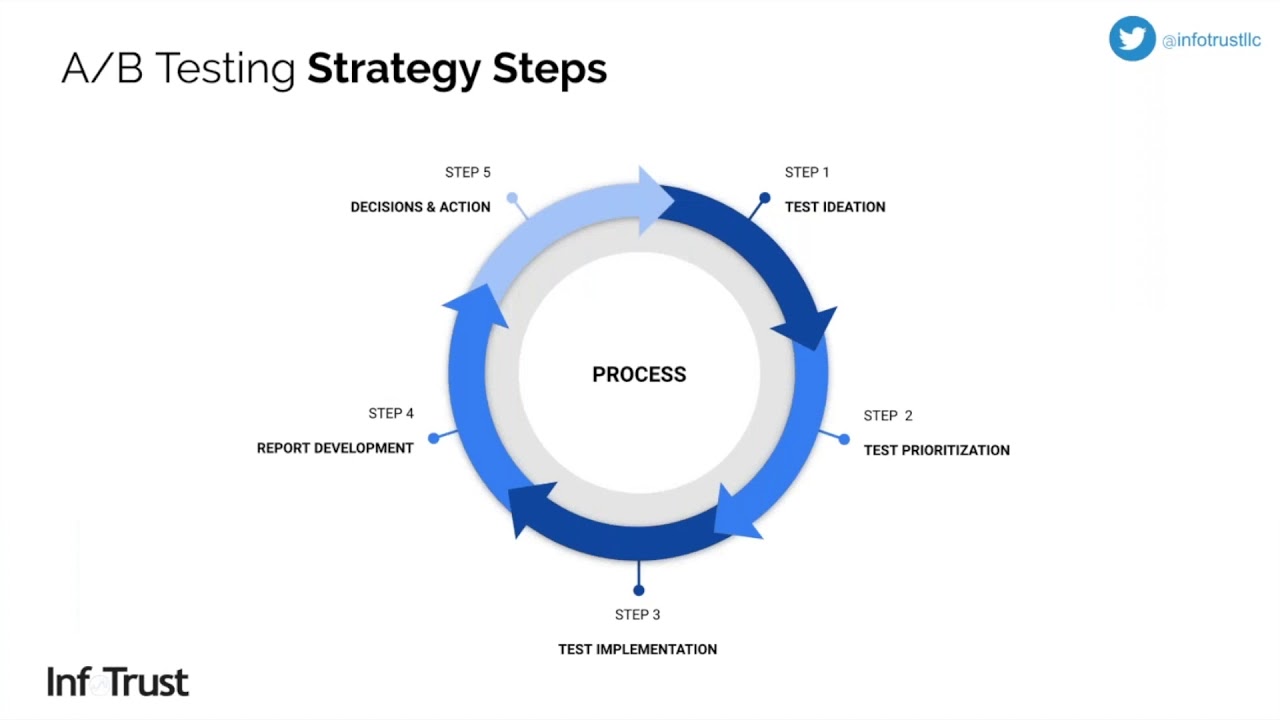

Continuous Improvement

A/B testing is not a one-time task. It’s an ongoing process. Continuous improvement ensures your website stays optimized over time.

- Run new tests regularly.

- Analyze the results and apply new findings.

- Monitor performance to ensure changes have a positive impact.

By maintaining a cycle of testing and optimization, you can adapt to changing user preferences. This helps keep your website relevant and user-friendly.

Here’s a simple table to summarize the steps:

| Step | Action |
|---|---|
| Identify Metrics | Conversions, bounce rates, time on page |
| Analyze Data | Compare control and variant metrics |
| Implement Changes | Update website based on findings |
| Run New Tests | Regularly test new hypotheses |
| Monitor Performance | Ensure changes improve metrics |

This cycle of analysis, implementation, and testing is key to continuous improvement. By staying committed to this process, your website can achieve better performance and user satisfaction.

Case Studies Of Successful A/B Tests

A/B testing can be a powerful tool to optimize and improve your website’s performance. By comparing two versions of a webpage or app, businesses can determine which one performs better. This section covers some successful A/B test case studies. We will look at examples from both e-commerce and B2B industries.

E-commerce Success Stories

E-commerce businesses often use A/B testing to boost sales and improve user experience. Here are some notable examples:

| Company | Tested Element | Result |
|---|---|---|
| Amazon | Call-to-Action Button | 20% increase in clicks |
| eBay | Product Descriptions | 15% boost in conversions |

Amazon tested different colors for their call-to-action button. They found that a brighter color increased clicks by 20%. This simple change made a significant impact on their sales.

eBay experimented with the length and style of their product descriptions. They discovered that concise, bullet-point descriptions led to a 15% increase in conversions. This finding helped them improve their overall customer engagement.

B2B Success Stories

B2B companies also benefit from A/B testing. Here are some examples:

- HubSpot tested different landing page designs. They saw a 30% increase in lead generation by using a cleaner layout.

- Salesforce experimented with email subject lines. They achieved a 25% higher open rate by using personalized subject lines.

The landing page result encouraged HubSpot to redesign other pages in a similar style. The subject line insight helped Salesforce create more effective email campaigns.

Common Pitfalls To Avoid

When conducting A/B testing, avoiding common pitfalls is crucial for obtaining accurate results. These mistakes can lead to incorrect conclusions and wasted resources. Below, we explore some common pitfalls to avoid.

Sample Size Errors

One frequent mistake is not having a large enough sample size. Small sample sizes can lead to inaccurate results and misleading conclusions.

- Wait until you have a sufficient number of users.

- Use sample size calculators to determine the correct size.

- Avoid stopping tests too early.

Ensuring a correct sample size helps in making data-driven decisions.
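To give a feel for what a sample size calculator does, here is a rough sketch based on the standard normal-approximation formula for comparing two proportions. The defaults (95% confidence, 80% power) are common conventions rather than requirements, the function name is hypothetical, and dedicated calculators may use slightly different formulas.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline, expected, alpha=0.05, power=0.80):
    """Approximate visitors needed per version to detect a change in conversion rate.

    baseline: current conversion rate (e.g. 0.035 for 3.5%).
    expected: conversion rate you hope the variant achieves.
    alpha:    significance level (0.05 corresponds to 95% confidence).
    power:    chance of detecting the change if it is real.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    variance = baseline * (1 - baseline) + expected * (1 - expected)
    n = (z_alpha + z_beta) ** 2 * variance / (expected - baseline) ** 2
    return math.ceil(n)


# Detecting a lift from 3.5% to 4.2% versus a smaller lift from 3.5% to 3.8%.
print(sample_size_per_variant(0.035, 0.042))
print(sample_size_per_variant(0.035, 0.038))
```

Under these assumptions, detecting a lift from 3.5% to 4.2% takes on the order of 12,000 visitors per version, and the smaller lift needs several times more. That is why small expected improvements call for long tests or high-traffic pages.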

Bias In Testing

Bias can severely affect the outcome of A/B tests. Uncontrolled variables can introduce bias.

| Type of Bias | Example | Solution |
|---|---|---|
| Selection Bias | Choosing users who are likely to favor one variant. | Randomly assign users to different variants. |
| Measurement Bias | Using flawed metrics to evaluate performance. | Ensure metrics are accurate and reliable. |

Eliminating bias ensures that your test results are valid and trustworthy.

Future Trends In A/B Testing

The future of A/B testing is evolving rapidly. New technologies are enhancing how we test and optimize user experiences. Let’s explore some of the most promising trends.

AI And Machine Learning

Artificial Intelligence (AI) and Machine Learning (ML) are changing A/B testing. They can analyze vast amounts of data quickly. This speed helps in identifying winning variations faster. AI-driven tools can also predict outcomes. This reduces the need for long testing periods.

Here are some ways AI and ML are influencing A/B testing:

- Automated Testing: AI can run multiple tests simultaneously.

- Personalization: ML algorithms can tailor user experiences in real-time.

- Optimized Decision-Making: AI helps in making data-driven decisions quickly.

Predictive Analytics

Predictive analytics is another exciting trend in A/B testing. It uses historical data to forecast future outcomes. This helps in understanding the potential impact of changes before implementation.

Benefits of predictive analytics in A/B testing include:

| Benefit | Description |
|---|---|
| Better Forecasting | Predict outcomes based on past data. |
| Risk Reduction | Identify potential issues before they occur. |
| Improved ROI | Focus on strategies that are more likely to succeed. |

These trends are transforming A/B testing. Businesses can now make smarter, faster decisions. This leads to better user experiences and higher conversions.

Frequently Asked Questions

What Is A/B Testing?

A/B testing is a method of comparing two versions of a webpage or app to determine which one performs better.

Why Is A/B Testing Important?

A/B testing is crucial for optimizing user experience and improving conversion rates by making data-driven decisions.

How Does A/B Testing Work?

A/B testing involves splitting traffic between two versions of a webpage and measuring performance to identify the better version.

What Are Common A/B Testing Strategies?

Common A/B testing strategies include changing headlines, images, call-to-action buttons, and layout to see what resonates best with users.

Conclusion

A/B testing helps improve your website’s performance. It provides clear data for decisions. Start with small tests. Analyze the results carefully. Make changes based on what works best. Track and repeat the process. This practice ensures your website stays effective.

Remember, consistency is key. Regular testing leads to better outcomes. Always aim to understand your audience. Their preferences drive your success. Happy testing!