A/B testing, also called split testing, is essential for optimizing digital strategies. It helps businesses make data-driven decisions.

But what are the best practices to follow? Understanding A/B testing can greatly improve your marketing efforts. By comparing two versions of a web page or app, you can see which one performs better. This process provides valuable insights into what resonates with your audience.

Yet, running effective A/B tests requires more than just splitting your audience. You need a clear strategy and adherence to best practices. This ensures accurate results and actionable insights. In this blog post, we will explore the top practices for A/B testing. By following these guidelines, you can maximize the effectiveness of your tests and make informed decisions. Let’s dive in!

Introduction To A/B Testing

A/B testing is a powerful tool in digital marketing. It helps businesses understand what works best for their audience, and it grounds decisions about user experience and conversions in data rather than opinion.

What Is A/B Testing?

A/B testing, also known as split testing, shows two (or occasionally more) variants of a webpage or app to users at random and compares how each performs. The goal is to identify which version drives more conversions or engagement.

Here’s how it works:

- Create two versions of a webpage (Version A and Version B).

- Randomly split your audience into two groups.

- Show Version A to one group and Version B to the other group.

- Analyze which version achieves your desired outcome better.
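The splitting step above can be sketched in a few lines of Python. This is a minimal illustration, not the method any particular testing tool uses: it swaps a true random draw for a hash of the user ID, so a returning visitor always sees the same version. The function name `assign_variant`, the experiment label, and the `user-N` IDs are all hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "homepage-test") -> str:
    """Return "A" or "B" for a user; the same user always gets the same answer."""
    # Hash the experiment name together with the user ID so that separate
    # experiments split the same audience independently of each other.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Treat the hash as a large integer: even values map to A, odd to B.
    return "A" if int(digest, 16) % 2 == 0 else "B"


# Example: split 10,000 hypothetical user IDs and count each group.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}")] += 1
print(counts)  # the two counts should be close to 5,000 each
```

Hashing keeps the split stable without storing an assignment for every visitor, which is one reason this pattern is common in practice.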

Importance Of A/B Testing

A/B testing is crucial for several reasons:

- Improves User Experience: By testing different versions, you can find out what your users prefer. This helps create a more user-friendly experience.

- Increases Conversion Rates: A/B testing helps identify the most effective elements. This leads to higher conversion rates.

- Data-Driven Decisions: This method relies on data rather than guesswork. It ensures that changes are made based on actual user behavior.

The table below summarizes these benefits:

| Benefit | Description |
|---|---|
| Improves User Experience | Find out what users like and make changes accordingly. |
| Increases Conversion Rates | Identify the elements that drive more conversions. |
| Data-Driven Decisions | Make changes based on user behavior, not assumptions. |

Implementing A/B testing is a step towards a more effective marketing strategy. It allows for continuous improvement based on real data.

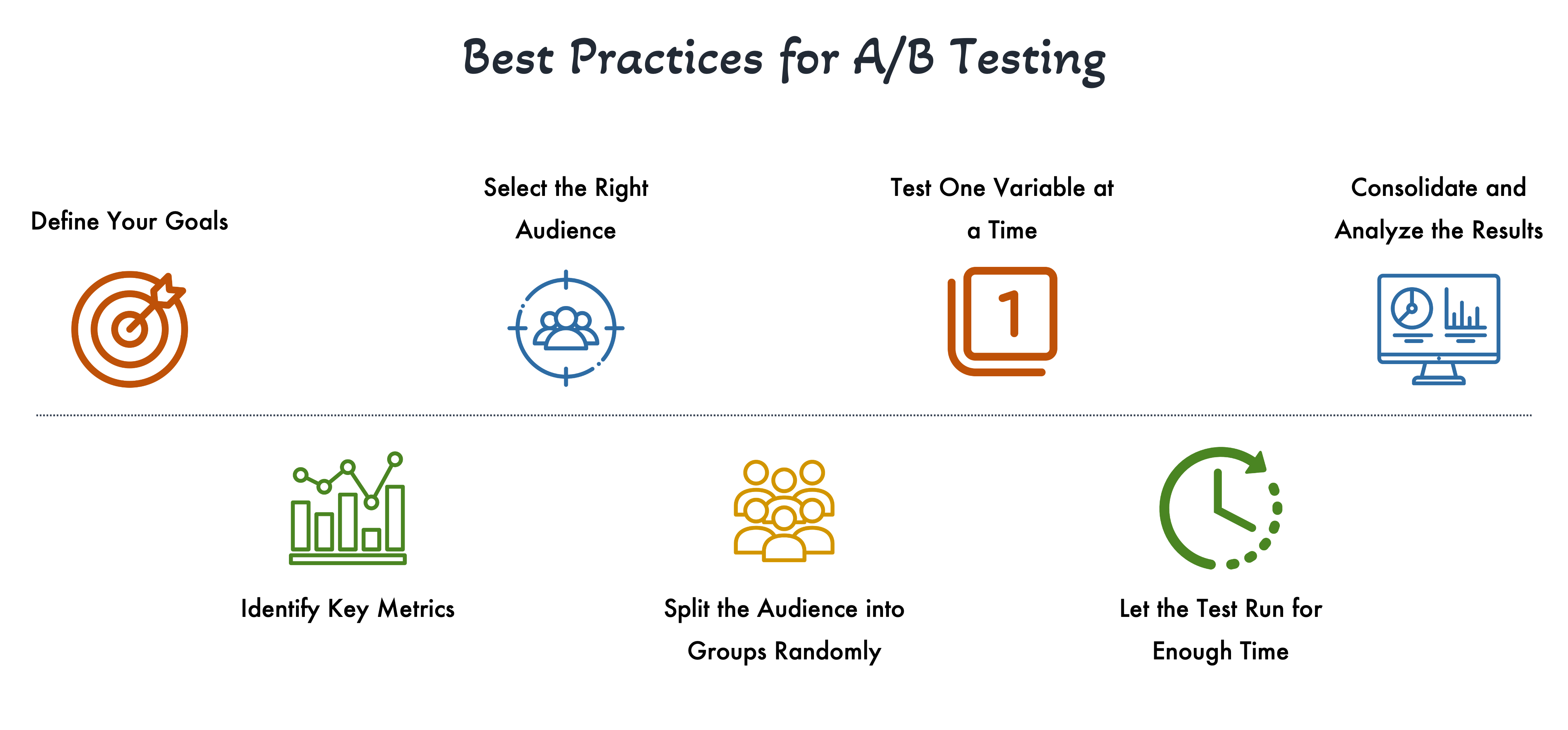

Setting Clear Objectives

Setting clear objectives is crucial for the success of your A/B testing. Without clear objectives, you might end up with results that are difficult to interpret or that do not align with your overall business goals. By defining specific goals and identifying key metrics, you ensure that your A/B tests are focused and meaningful. This section will guide you through the best practices for setting clear objectives.

Defining Goals

Before starting your A/B test, clearly define what you aim to achieve. This could be increasing the click-through rate (CTR), boosting conversions, or improving user engagement. Start with a specific goal that aligns with your overall business objectives. For example:

- Increase sign-ups by 20% in the next month

- Reduce bounce rate on the landing page by 15%

- Improve average order value by $10

These goals provide a clear direction for your test and help in measuring success.

Identifying Key Metrics

Once you have defined your goals, identify the key metrics that will help you measure progress. Key metrics are the quantifiable indicators of your objectives. For example:

| Objective | Key Metrics |
|---|---|
| Increase sign-ups | Sign-up rate, Conversion rate |
| Reduce bounce rate | Bounce rate, Time on page |
| Improve average order value | Average order value, Total revenue |

Tracking these metrics will help you determine whether the changes you made are effective. Use analytics tools to monitor these metrics throughout your test.
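As a rough sketch of how the metrics in the table are calculated, the helpers below turn raw counts into a sign-up rate, a bounce rate, and an average order value. The function names and all numbers are made up for illustration; a real analytics tool will normally report these figures for you.

```python
def rate(events: int, sessions: int) -> float:
    """Share of sessions in which the event happened (0.0 if there were no sessions)."""
    return events / sessions if sessions else 0.0


def average_order_value(revenue: float, orders: int) -> float:
    """Total revenue divided by the number of orders (0.0 if there were no orders)."""
    return revenue / orders if orders else 0.0


# Hypothetical counts for one version of a page.
sessions, sign_ups, bounces = 1000, 50, 400
orders, revenue = 20, 900.0

print(f"Sign-up rate: {rate(sign_ups, sessions):.1%}")
print(f"Bounce rate: {rate(bounces, sessions):.1%}")
print(f"Average order value: ${average_order_value(revenue, orders):.2f}")
```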

By setting clear objectives and identifying key metrics, you create a roadmap for your A/B testing. This ensures that your tests are purposeful and aligned with your business goals.

Choosing The Right Variables

Choosing the right variables is crucial for the success of your A/B testing. It helps in understanding which changes impact user behavior. Making informed decisions about which elements to test can save time and resources. Let’s dive into some best practices.

Selecting Elements To Test

Focus on elements that have a significant impact. These could be headlines, call-to-action buttons, or images. Start with high-impact areas. Testing less impactful elements may not yield meaningful results.

Create a list of potential elements to test. Prioritize them based on their importance. For example:

- Headlines

- Call-to-Action Buttons

- Images

- Product Descriptions

Prioritizing Changes

Not all changes have the same level of importance. Some are more critical than others. To prioritize changes, consider the following factors:

| Factor | Description |
|---|---|
| Impact | How much the change can affect user behavior |
| Effort | The resources needed to implement the change |
| Testing Time | How long it will take to get meaningful data |

Focus on high-impact, low-effort changes first. These are quick wins. They can provide immediate insights. This helps in making timely decisions. Use this approach to streamline your testing process.
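One possible way to turn these three factors into an ordered backlog is sketched below. The scoring rule (impact divided by effort, with shorter tests breaking ties) and the 1 to 5 ratings are assumptions made for this example, not an established formula, so adjust them to your own team's judgment.

```python
def prioritize(candidates):
    """Sort test ideas so high-impact, low-effort, quick tests come first.

    Each candidate is a tuple (name, impact, effort, weeks), where impact and
    effort are hypothetical 1-5 ratings and weeks is the estimated testing time.
    """
    return sorted(
        candidates,
        # Higher impact-per-effort first; shorter tests win ties.
        key=lambda c: (-(c[1] / c[2]), c[3]),
    )


ideas = [
    ("Product descriptions", 3, 4, 4),
    ("Images", 3, 3, 3),
    ("Call-to-action button", 4, 1, 2),
    ("Headline", 5, 1, 2),
]

for name, impact, effort, weeks in prioritize(ideas):
    print(f"{name}: impact={impact}, effort={effort}, weeks={weeks}")
```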

By following these best practices, you can optimize your A/B testing efforts. Doing so ensures you are testing the most relevant variables, which leads to actionable insights and an improved user experience.

Creating Hypotheses

Creating strong hypotheses is a critical step in A/B testing. A well-formed hypothesis gives direction and purpose to your test. It helps you understand the cause and effect relationships in your experiments. Below, we will discuss the best practices for formulating hypotheses and establishing test scenarios.

Formulating Hypotheses

A good hypothesis should be clear and specific. It should also be based on data and observations. Start by identifying a problem or opportunity in your current setup. For example, you might notice a high bounce rate on your landing page. This observation can guide you to a hypothesis.

Here are some steps to formulate a hypothesis:

- Identify the problem: Use data to pinpoint issues. For instance, low conversion rates.

- Analyze the cause: Look at user behavior and feedback. Understand why the issue exists.

- Formulate a hypothesis: Create a testable statement. Example: “Changing the CTA button color to red will increase clicks.”

Establishing Test Scenarios

After you have a hypothesis, establish your test scenarios. These scenarios should clearly define what you are testing. They should also outline how you will measure success.

Here’s a simple table to help you create test scenarios:

| Test Scenario | Hypothesis | Metrics to Measure |
|---|---|---|
| CTA Button Color | Changing color will increase clicks | Click-through rate |
| Headline Text | New headline will reduce bounce rate | Bounce rate |

Make sure each scenario includes a control and a variant. The control is your current setup, while the variant contains the single change you are testing. This will help you compare results accurately.

Clear test scenarios ensure you understand the impact of changes. They make it easier to analyze and interpret your data.

Designing Effective Tests

Designing effective A/B tests is crucial for gaining valid insights. It involves careful planning and execution. This ensures reliable and actionable results. Below, we explore key aspects of this process.

Crafting Test Variations

Creating variations is the first step. Variations should be simple and focused. Test one element at a time so that any difference in results can be attributed to that change. For example:

- Change button color

- Modify headlines

- Adjust layout

Each variation must be distinct. This helps in understanding the impact of specific changes.

Ensuring Consistency

Consistency is vital in A/B testing. Ensure all elements, except the variable, remain the same. This includes:

- Target audience

- Time of testing

- Traffic sources

Maintaining consistency minimizes external influences. In practice, this means running both versions over the same period, with the same audience and traffic sources, so that the results are accurate and trustworthy.

| Element | Test Variation | Consistency Requirement |
|---|---|---|
| Button Color | Red vs Blue | Same audience, time, traffic |
| Headline | Short vs Long | Same audience, time, traffic |


Implementing The Tests

Implementing A/B tests is crucial for optimizing website performance. This section will guide you through the best practices for launching and monitoring your tests. By following these steps, you can ensure accurate results and make informed decisions to improve user experience.

Launching A/B Tests

Launching your A/B tests correctly is essential to gather reliable data. Follow these steps to ensure a smooth start:

- Define Clear Goals: Identify what you want to achieve with the test. Examples include increasing click-through rates or reducing bounce rates.

- Split Your Audience: Divide your audience into equal groups to ensure a fair comparison. Use random assignment to avoid bias.

- Create Variations: Develop different versions of the element you are testing. Ensure only one variable changes at a time.

- Use Reliable Tools: Choose an established A/B testing tool such as Optimizely or VWO. (Google Optimize, once a common free option, was discontinued by Google in September 2023.) These tools handle traffic splitting and result tracking for you.

- Set a Time Frame: Determine how long the test will run. Ensure the duration is enough to gather significant data.
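For the time-frame step, a back-of-the-envelope estimate can be derived from the sample size you need and the traffic you get. The sketch below assumes you already know the required sample per version and your average daily visitors. Rounding up to whole weeks is a rule of thumb added here so that every weekday is equally represented, and the function name and figures are hypothetical.

```python
import math


def estimate_duration_days(sample_per_variant: int, daily_visitors: int, variants: int = 2) -> int:
    """Estimate how many days a test must run, rounded up to full weeks."""
    if daily_visitors <= 0:
        raise ValueError("daily_visitors must be positive")
    # Total visitors needed across all versions, divided by daily traffic.
    days = math.ceil(sample_per_variant * variants / daily_visitors)
    # Round up to whole weeks so weekday and weekend behavior are both covered.
    return math.ceil(days / 7) * 7


# Hypothetical example: 8,000 visitors needed per version, 1,000 visitors per day.
print(estimate_duration_days(8000, 1000))
```

With these example numbers the raw estimate is 16 days, which the function rounds up to three full weeks.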

Monitoring Performance

Monitoring your A/B tests is vital to ensure accuracy and effectiveness. Keep a close eye on these aspects:

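- Real-Time Tracking: Use your A/B testing tool to monitor results in real-time. This helps you spot issues early, such as broken tracking or a variation that fails to load. Treat early numbers as a health check rather than a verdict.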

- Analyze Metrics: Focus on key metrics like conversion rates, bounce rates, and user engagement. Compare these metrics across different variations.

- Check for Statistical Significance: Ensure your results are statistically significant before making decisions. This means a difference as large as the one you observed would be unlikely if the two versions actually performed the same.

- Document Findings: Keep detailed records of your observations and insights. This helps in future tests and decision-making.
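The statistical significance check in the list above is usually done by your testing tool, but the idea can be sketched with a two-proportion z-test, one common choice for comparing conversion rates. The function name and the visitor and conversion counts below are assumptions for illustration only.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rate, B minus A."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate: the best estimate if both versions truly convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Probability of a difference at least this large under "no real difference".
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: 50 of 1,000 visitors converted on A, 70 of 1,000 on B.
z, p = two_proportion_z_test(50, 1000, 70, 1000)
print(f"z = {z:.2f}, p-value = {p:.3f}")
print("Significant at 5%" if p < 0.05 else "Not significant at 5%")
```

Note that with these example counts a jump from 5% to 7% still falls just short of the usual 5% threshold, which is why sample size matters as much as the size of the lift.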

By following these best practices, you can effectively implement and monitor your A/B tests. This will lead to more accurate insights and better optimization of your website.

Analyzing Test Results

Once you’ve conducted an A/B test, the next step is to analyze the results. This phase is crucial because it helps determine which version performed better. Understanding how to interpret the data and draw meaningful conclusions can guide future decisions and strategies. Let’s explore the best practices for analyzing A/B test results.

Interpreting Data

Interpreting data involves understanding metrics and key performance indicators (KPIs). Focus on metrics like conversion rate, click-through rate (CTR), and bounce rate. These metrics will give insights into user behavior and preferences.

Use tables to compare results from different versions. This makes it easier to see which version performed better.

| Metric | Version A | Version B |
|---|---|---|
| Conversion Rate | 5% | 7% |
| Click-Through Rate (CTR) | 10% | 15% |
| Bounce Rate | 40% | 30% |

Analyze statistical significance to ensure results are not due to chance. Use tools to calculate the p-value or confidence interval.
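To make the confidence interval concrete, the sketch below computes one for the difference between the 5% and 7% conversion rates in the table. The table does not give sample sizes, so 2,000 visitors per version is an assumption made purely for this example, as is the function name. The calculation uses the standard normal approximation.

```python
from math import sqrt
from statistics import NormalDist


def difference_confidence_interval(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Return (low, high) bounds for the conversion-rate difference, B minus A."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Standard error of the difference, using each version's own rate.
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    # Critical value for the requested confidence level (about 1.96 for 95%).
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    diff = p_b - p_a
    return diff - z * se, diff + z * se


# Assumed sample: 2,000 visitors per version, 100 conversions on A, 140 on B.
low, high = difference_confidence_interval(100, 2000, 140, 2000)
print(f"B minus A: between {low:+.2%} and {high:+.2%}")
```

With these assumed sample sizes the whole interval lies above zero, which is what supports calling Version B the better performer.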

Drawing Conclusions

After interpreting the data, draw conclusions based on the evidence. Identify which version had a better performance. Consider external factors like seasonality or marketing campaigns that might have influenced results.

Create a summary of findings:

- Version B had a higher conversion rate and CTR.

- Version B had a lower bounce rate.

- Results are statistically significant with a confidence level of 95%.

Document these findings for future reference. Share the insights with your team to inform future strategies.


Applying Insights

Gathering data from A/B tests is just the beginning. The real value comes from applying these insights effectively. This involves interpreting results, making decisions based on data, and ensuring continuous improvement. Let’s dive into these best practices.

Making Data-driven Decisions

Data-driven decisions are key to successful A/B testing. Begin by analyzing the data thoroughly. Look for patterns and trends. Use statistical significance to validate your findings.

- Identify Key Metrics: Focus on metrics that impact your goals.

- Segment Your Data: Break down data by user demographics or behavior.

- Compare Variants: Examine how different versions perform.
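Segmenting and comparing can be sketched as a simple group-by over raw visit records. The record layout `(segment, variant, converted)`, the segment labels, and the function name are hypothetical, and in practice an analytics export or a dataframe library would do the same job.

```python
from collections import defaultdict


def conversion_by_segment(records):
    """Return {(segment, variant): conversion rate} from (segment, variant, converted) records."""
    totals = defaultdict(lambda: [0, 0])  # key -> [conversions, visitors]
    for segment, variant, converted in records:
        bucket = totals[(segment, variant)]
        bucket[0] += int(converted)
        bucket[1] += 1
    return {key: conversions / visitors for key, (conversions, visitors) in totals.items()}


# A handful of made-up visits, far too few for real conclusions.
visits = [
    ("mobile", "A", True),
    ("mobile", "A", False),
    ("mobile", "B", True),
    ("desktop", "A", False),
    ("desktop", "B", True),
    ("desktop", "B", False),
]

for (segment, variant), value in sorted(conversion_by_segment(visits).items()):
    print(f"{segment} / {variant}: {value:.0%}")
```

Keep in mind that each segment contains fewer users than the full test, so differences inside a segment need their own significance check.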

Once you have clear insights, make informed decisions. Implement changes that align with your objectives. Document your decisions and the rationale behind them.

Continuous Improvement

Continuous improvement is essential for long-term success. A/B testing should be an ongoing process. Always look for ways to optimize.

- Review Results: Regularly review test outcomes.

- Implement Changes: Apply successful changes quickly.

- Set New Goals: Establish new objectives based on recent findings.

Track performance after implementing changes. Monitor metrics to ensure improvements are sustainable. Keep iterating to refine your approach.

| Key Action | Purpose |
|---|---|
| Analyze Data | Understand performance and identify trends. |
| Make Decisions | Implement changes based on insights. |
| Review Results | Ensure changes are effective. |
| Set New Goals | Focus on ongoing optimization. |

By following these best practices, you can turn A/B testing insights into actionable strategies. This will enhance your website’s performance and help you achieve your goals.

Common Pitfalls To Avoid

A/B testing is a powerful tool for improving your website’s performance. However, many teams fall into common traps that can skew results. Below, we will discuss some common pitfalls to avoid.

Avoiding Bias

Bias can ruin your A/B test results. Ensure your samples are representative. Don’t select users based on their behavior or preferences. This can skew your data.

- Randomize user selection.

- Avoid selecting users based on demographics.

- Ensure each user has an equal chance of being in either group.

Bias can also come from external factors. Avoid testing during holidays or major events. These can affect user behavior in unpredictable ways.

Ensuring Statistical Significance

Statistical significance is crucial for reliable A/B test results. Without it, your results might be due to chance. Ensure your test runs long enough to gather sufficient data.

- Determine the sample size needed before starting the test.

- Use a calculator to estimate the required sample size.

- Ensure the test runs for a sufficient period.

Small sample sizes can lead to incorrect conclusions. Larger sample sizes provide more reliable results. Use tools and calculators to help determine the right sample size.
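For readers curious about what a sample size calculator does under the hood, here is a minimal sketch using the standard normal-approximation formula for comparing two conversion rates. The defaults of a 5% significance level and 80% power are common conventions rather than requirements, and the function name and example rates are assumptions for illustration.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline: float, target: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed per version to detect a change from baseline to target rate."""
    if baseline == target:
        raise ValueError("baseline and target rates must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_power = NormalDist().inv_cdf(power)          # desired chance of detecting the lift
    variance = baseline * (1 - baseline) + target * (1 - target)
    return ceil((z_alpha + z_power) ** 2 * variance / (target - baseline) ** 2)


# Example: current conversion rate of 5%, hoping to detect an increase to 7%.
print(sample_size_per_variant(0.05, 0.07))
```

For this example the answer is on the order of 2,200 visitors per version, and the requirement grows quickly as the lift you want to detect gets smaller.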

Remember, patience is key. Don’t stop your test too early. Waiting for the right amount of data ensures your results are valid.


Tools And Resources

A/B testing is a powerful method to improve your website’s performance. To get the best results, you need the right tools and resources. In this section, we’ll explore some popular A/B testing tools and provide further reading materials to enhance your knowledge.

Popular A/B Testing Tools

There are many tools available for A/B testing. Here are some of the most popular ones:

| Tool | Description |
|---|---|
| Google Optimize | Formerly a free tool from Google for A/B testing; discontinued in September 2023. |
| Optimizely | A popular tool with advanced features. |
| VWO (Visual Website Optimizer) | A versatile tool for A/B and multivariate testing. |
| AB Tasty | A user-friendly tool for A/B testing and personalization. |

Further Reading

To deepen your understanding of A/B testing, check out these resources:

- CXL’s A/B Testing Guide – A comprehensive guide covering all aspects of A/B testing.

- Optimizely’s A/B Testing Glossary – A glossary of key terms and concepts in A/B testing.

- VWO’s A/B Testing Resources – Articles, case studies, and guides on A/B testing.

Frequently Asked Questions

What Is A/B Testing?

A/B testing is a method of comparing two versions of a webpage to determine which one performs better. It helps in optimizing content and improving conversion rates.

Why Is A/B Testing Important?

A/B testing is important because it helps identify what works best for your audience. This leads to better user engagement and higher conversion rates.

How To Conduct A/B Testing?

To conduct A/B testing, create two versions of a webpage. Split your audience randomly and measure which version performs better based on specific metrics.

What Are Common A/B Testing Mistakes?

Common A/B testing mistakes include testing too many variables, not having a clear hypothesis, and ending tests too early. Avoid these to get accurate results.

Conclusion

A/B testing helps improve your website’s performance. Stick to one variable per test. Analyze your results carefully. Use the data to make informed decisions. Remember, testing is an ongoing process. Keep testing to find what works best. Stay patient and consistent.

Your efforts will pay off in better user experiences. Happy testing!