A/B testing tools play a vital role in optimizing digital content. They help businesses make data-driven decisions to improve performance.

Understanding the effectiveness of A/B testing tools is crucial for any business. These tools compare two versions of a webpage or app to see which performs better. By testing different elements, companies can determine what resonates most with their audience.

This process leads to better user experiences and increased conversions. With A/B testing, businesses can minimize guesswork and rely on concrete data. The right tool can make a significant difference in marketing strategies. In this blog, we’ll explore how A/B testing tools work, their benefits, and why they are essential for any business looking to thrive in a competitive market.

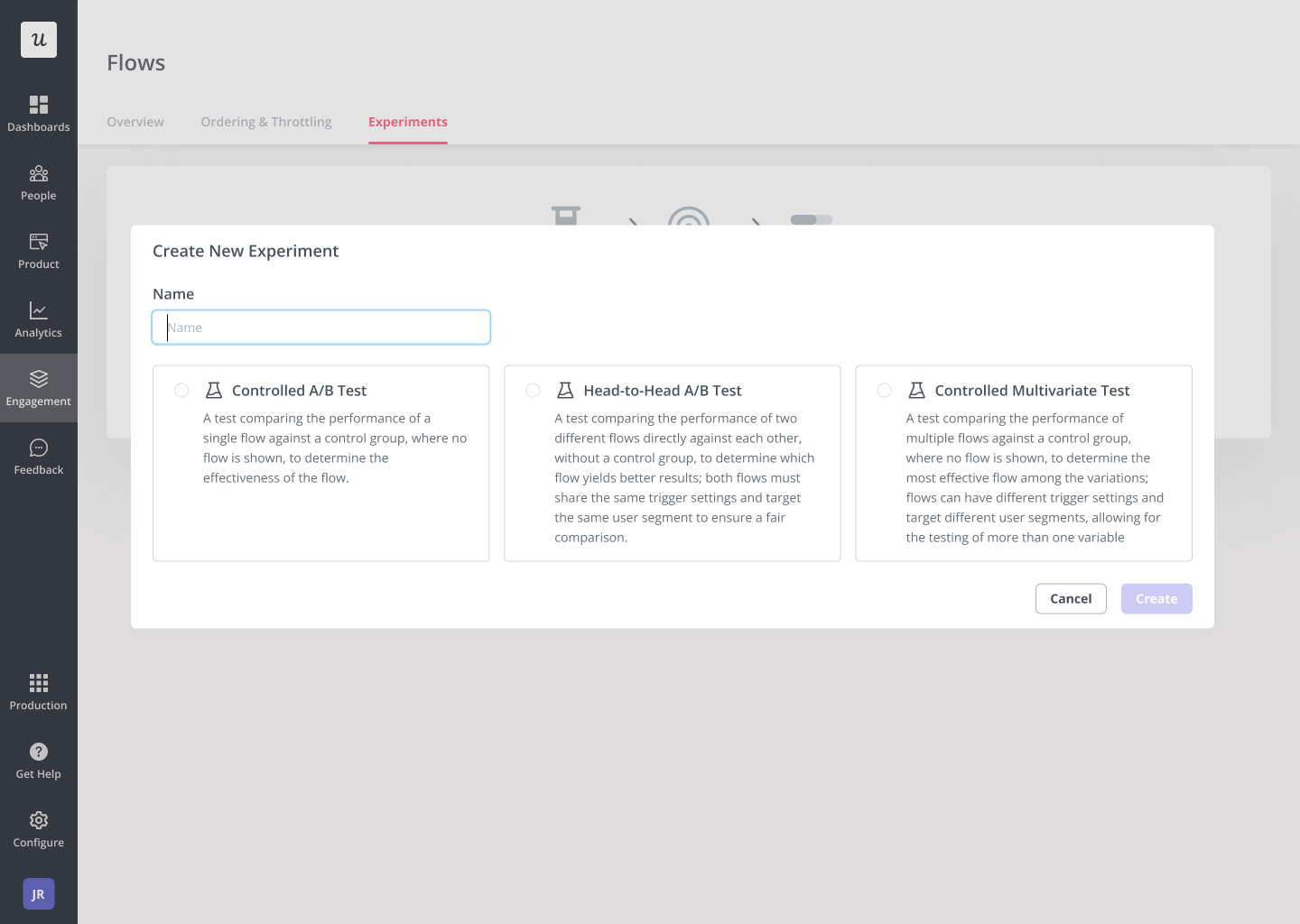

Credit: userpilot.com

Introduction To A/B Testing

A/B testing is a vital process in the world of marketing. It helps businesses understand what works best for their audience. By comparing two versions of a webpage, email, or ad, companies can see which one performs better. This leads to smarter decisions and improved results.

What Is A/B Testing?

A/B testing, also known as split testing, involves comparing two versions of a digital asset. Each version is shown to a different, randomly chosen segment of the audience. The goal is to determine which version yields better outcomes. For example, a company may test two different headlines on a landing page. The version that gets more clicks is the winner, provided the difference is large enough to rule out chance.

Here’s a simple example:

| Version | Headline | Click-Through Rate (CTR) |
|---|---|---|
| A | Get Your Free E-book Now! | 5% |
| B | Download Your Free Guide Today! | 7% |

In this case, Version B has a higher CTR, which suggests it resonates better with the audience, assuming enough visitors saw each version for the difference to be statistically significant.
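
To put a number on the gap in the example table, it helps to separate the absolute gain (in percentage points) from the relative lift. The sketch below is a minimal illustration using the 5% and 7% CTRs from the table; the function name `relative_lift` is a hypothetical helper, not part of any testing tool.

```python
def relative_lift(control_rate: float, variant_rate: float) -> float:
    """Return the variant's improvement over the control as a fraction of the control."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (variant_rate - control_rate) / control_rate


ctr_a = 0.05  # Version A click-through rate from the table
ctr_b = 0.07  # Version B click-through rate from the table

absolute_gain = ctr_b - ctr_a
lift = relative_lift(ctr_a, ctr_b)

print(f"Absolute gain: {absolute_gain * 100:.1f} percentage points")
print(f"Relative lift: {lift:.0%}")
```

Reporting both figures avoids confusion: a 2-point absolute gain on a 5% baseline is a 40% relative lift.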

Importance In Marketing

A/B testing is crucial for optimizing marketing efforts. It allows marketers to make data-driven decisions. This reduces guesswork and enhances the effectiveness of campaigns. Here are some key benefits:

- Improved Conversion Rates: By identifying the best-performing elements, businesses can boost their conversion rates.

- Better User Experience: A/B testing helps in understanding user preferences, leading to a more tailored experience.

- Cost Efficiency: Effective campaigns save money by focusing on what’s proven to work.

- Informed Decision Making: Data from A/B tests provides clear insights, enabling informed decisions.

Marketers can test various elements such as headlines, images, calls-to-action, and layouts. This ensures every aspect of the campaign is optimized for success.

Selecting The Right A/B Testing Tools

Selecting the right A/B testing tools is crucial for optimizing your website’s performance. With many options available, choosing the best tool can be overwhelming. This section will help you make an informed decision. We will cover the criteria for selection and provide an overview of popular tools.

Criteria For Selection

When picking an A/B testing tool, consider these key criteria:

- Ease of Use: The tool should have a user-friendly interface.

- Integration: Ensure it integrates well with your existing systems.

- Cost: Compare the pricing plans to fit your budget.

- Features: Look for features like segmentation, multivariate testing, and reporting.

- Support: Check for the availability of customer support and resources.

Popular Tools Overview

Here is an overview of some popular A/B testing tools:

| Tool | Key Features | Pricing |
|---|---|---|
| Optimizely | Visual editor, multivariate testing, real-time results | Custom pricing |
| VWO | Heatmaps, session recordings, form analytics | Starts at $199/month |
| Google Optimize | Free version, integration with Google Analytics | Discontinued by Google in September 2023 |
| AB Tasty | AI-driven insights, audience segmentation, funnel analysis | Custom pricing |

Each tool has its strengths. Evaluate them based on your specific needs. This will help you choose the best one for your A/B testing efforts.

Setting Up A/B Tests

A/B testing is a powerful tool for optimizing website performance. Setting up A/B tests correctly is crucial. Proper setup ensures reliable results. Follow these steps to get started.

Defining Goals

Defining clear goals is the first step. Goals guide the entire testing process. Start by asking, “What do we want to achieve?” Common goals include increasing conversion rates, improving user engagement, or reducing bounce rates.

Create a list of primary and secondary goals. Primary goals are the main focus. Secondary goals support the primary goals. For example, if your primary goal is increasing sign-ups, a secondary goal might be improving page load speed.

| Goal Type | Example |
|---|---|
| Primary | Increase sign-ups |
| Secondary | Improve page load speed |

Creating Variations

After defining goals, create variations. Variations are different versions of the element being tested. They can be as simple as changing a button color or as complex as redesigning a page layout.

Use the following steps to create effective variations:

- Identify the element to change (e.g., button, headline).

- Brainstorm different changes (e.g., color, text, placement).

- Create multiple versions (e.g., Variation A, Variation B).

Test only one change per variation. This ensures clear results. For example, if testing a button color, keep all other elements the same.

Use an A/B testing tool to implement variations. Tools like Optimizely and VWO are popular choices; Google Optimize, once a common free option, was discontinued by Google in September 2023. Such tools provide user-friendly interfaces and detailed analytics.

Remember, the goal is to find the variation that performs best. This helps achieve your primary and secondary goals.
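
Before opening any tool, it can help to write the test down as a small, checkable definition. The sketch below is one possible way to do that in Python; the `Experiment` and `Variation` classes and their field names are assumptions made for illustration and do not correspond to any vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class Variation:
    name: str
    description: str


@dataclass
class Experiment:
    name: str
    element: str                  # the single element being changed
    primary_goal: str
    secondary_goals: list[str] = field(default_factory=list)
    variations: list[Variation] = field(default_factory=list)

    def validate(self) -> None:
        """Reject definitions that cannot produce a meaningful comparison."""
        if len(self.variations) < 2:
            raise ValueError("an experiment needs a control and at least one variation")
        names = [v.name for v in self.variations]
        if len(set(names)) != len(names):
            raise ValueError("variation names must be unique")


# Hypothetical experiment built from the goals and button example above.
experiment = Experiment(
    name="signup-button-color",
    element="sign-up button",
    primary_goal="Increase sign-ups",
    secondary_goals=["Improve page load speed"],
    variations=[
        Variation("A", "control: existing button color"),
        Variation("B", "same button with a new color, nothing else changed"),
    ],
)
experiment.validate()
print(experiment.name, [v.name for v in experiment.variations])
```

Keeping the changed element as a single field is a small nudge toward the one-change-per-variation rule.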


Running A/B Tests

Running A/B tests is crucial for understanding what works best for your audience. These tests help you compare two versions of a webpage or app to see which performs better. The goal is to optimize your content, design, or functionality for improved user experience and engagement.

Implementation Steps

Implementing A/B tests involves several key steps:

- Define Goals: Determine what you want to achieve. This could be higher click-through rates, better conversion rates, or improved user engagement.

- Select Variables: Choose which elements to test. These might include headlines, images, call-to-action buttons, or page layouts.

- Create Variants: Develop two versions of the element you are testing. The original version is called the control, and the new version is the variation.

- Set Up Tools: Use A/B testing tools like Optimizely, VWO, or AB Tasty to run your tests (Google Optimize was discontinued in September 2023). These tools will help you track and analyze the performance of each variant.

- Run the Test: Launch the test and ensure it runs for a sufficient amount of time to gather meaningful data. The duration depends on your traffic and the complexity of your test.
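
Under the hood, running a test means deciding which visitor sees which variant. A common approach, sketched here as an assumption rather than a description of any specific tool, is to hash a stable user ID so that each visitor always lands in the same group. The function name `assign_variant` and the ID format are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str,
                   variants=("control", "variation")) -> str:
    """Deterministically map a user to a variant by hashing their ID.

    Including the experiment name in the key keeps assignments
    independent across different experiments.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# Simulate 10,000 hypothetical visitors and check the split is roughly even.
counts = {"control": 0, "variation": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "headline-test")] += 1

print(counts)
```

Because the assignment depends only on the ID and the experiment name, a returning visitor sees the same version on every visit without any stored state.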

Monitoring Performance

Monitoring the performance of your A/B tests is essential for accurate results. Here are some methods to do this effectively:

- Real-Time Analytics: Use real-time analytics to track user behavior and interactions with both variants.

- Conversion Tracking: Set up conversion tracking to measure how well each variant achieves your defined goals.

- Heatmaps: Implement heatmaps to visualize where users click, scroll, and spend the most time on your page.

- Statistical Significance: Ensure your results are statistically significant before making any decisions. This means having enough data to confidently say one variant is better than the other.

Regularly monitor these metrics to make informed decisions and improve your website or app based on real user data.

Analyzing A/B Test Results

Understanding the results of A/B tests is essential for improving your website. Analyzing these results helps you make informed decisions. This section covers how to interpret data and key metrics to track. Let’s dive into the details.

Interpreting Data

Interpreting A/B test data involves looking at various factors. Consider the statistical significance of your results. This tells you how likely it is that the observed difference arose by chance rather than from the change you made.

Check the confidence level. A common threshold is 95%, meaning a difference this large would appear by chance less than 5% of the time if the two versions truly performed the same. Use tools to calculate this. They help you understand if your A/B test results are trustworthy.

Compare the performance of the control and variant. Look at metrics like conversion rate, bounce rate, and click-through rate. This comparison helps you see which version performs better.
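
The significance check described above can be sketched with a two-proportion z-test, one standard method for comparing conversion rates. This is a minimal illustration using only the Python standard library; the visitor and conversion counts are hypothetical, chosen to give 5% and 7% rates.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both versions perform the same.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("cannot test: no variation in outcomes")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: 100 of 2,000 control visitors convert, 140 of 2,000 variant visitors.
z, p_value = two_proportion_z_test(conv_a=100, n_a=2000, conv_b=140, n_b=2000)

print(f"z = {z:.2f}, p = {p_value:.4f}")
print("Significant at 95% confidence" if p_value < 0.05 else "Not significant")
```

With smaller samples the same 5% versus 7% gap may not reach significance, which is why the raw rates alone are not enough to declare a winner.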

Key Metrics To Track

Tracking the right metrics is crucial for A/B testing success. Here are some key metrics to focus on:

- Conversion Rate: Measures the percentage of visitors completing a desired action. Higher conversion rates indicate better performance.

- Bounce Rate: Percentage of visitors who leave your site after viewing only one page. Lower bounce rates suggest more engaging content.

- Click-Through Rate (CTR): Percentage of visitors who click on a specific link. High CTR means your content is compelling.

- Average Session Duration: The average amount of time users spend on your site per visit. Longer sessions indicate higher engagement.

These metrics provide insights into user behavior. They help you understand which elements of your site need improvement.
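
As a rough illustration of how these four metrics are derived, the sketch below computes them from a handful of made-up session records. The `Session` fields are assumptions about what an analytics export might contain, and CTR is counted per session here for simplicity.

```python
from dataclasses import dataclass


@dataclass
class Session:
    pages_viewed: int
    clicked_cta: bool        # did the visitor click the tracked link?
    converted: bool          # did the visitor complete the desired action?
    duration_seconds: float


def summarize(sessions):
    """Compute the four key metrics for one variant's sessions."""
    total = len(sessions)
    if total == 0:
        raise ValueError("no sessions to summarize")
    return {
        "conversion_rate": sum(s.converted for s in sessions) / total,
        "bounce_rate": sum(s.pages_viewed == 1 for s in sessions) / total,
        "ctr": sum(s.clicked_cta for s in sessions) / total,
        "avg_session_seconds": sum(s.duration_seconds for s in sessions) / total,
    }


# Hypothetical sessions for a single variant.
sessions = [
    Session(1, False, False, 20.0),
    Session(3, True, True, 180.0),
    Session(2, True, False, 95.0),
    Session(1, False, False, 15.0),
]
summary = summarize(sessions)
for metric, value in summary.items():
    print(f"{metric}: {value}")
```

Running `summarize` once per variant gives the two columns needed for a side-by-side comparison.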

Use a table to track these metrics effectively:

| Metric | Control | Variant |
|---|---|---|
| Conversion Rate | 5% | 7% |
| Bounce Rate | 50% | 45% |
| CTR | 10% | 12% |
| Average Session Duration | 2 minutes | 3 minutes |

Regularly analyze these metrics. They guide your optimization efforts. Focus on the metrics that align with your goals. This ensures your A/B tests are effective.

Common Pitfalls In A/B Testing

Implementing A/B testing tools can significantly boost your website’s performance. Yet, many users fall into common pitfalls that can skew results. Understanding and avoiding these pitfalls is crucial for reliable outcomes.

Avoiding Bias

Bias can creep into A/B testing in various ways. Ensuring a fair test is essential.

- Selection Bias: Make sure your test groups are random. Non-random groups can lead to misleading results.

- Timing Bias: Run both versions at the same time and for at least one full week, so each sees the same mix of days and hours. User behavior may vary throughout the day or week.

- Device Bias: Ensure tests run across all devices. Different devices can show varied user behaviors.

Ensuring Statistical Significance

Statistical significance ensures your test results are reliable and not due to chance.

- Determine the required sample size before starting the test. A sample that is too small can lead to unreliable results.

- Run the test for an adequate duration. Short tests may not capture all user behaviors.

- Use appropriate statistical methods to analyze results. Incorrect analysis can lead to false conclusions.

Let’s look at an example of sample size calculation:

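```python
# Sample Size Calculation in Python
import math

from scipy.stats import norm  # requires SciPy


def sample_size(p1, p2, alpha=0.05, power=0.8):
    """Approximate visitors needed per group for a two-sided test of two proportions."""
    z_alpha = abs(norm.ppf(alpha / 2))  # critical value for the significance level
    z_beta = abs(norm.ppf(power))       # critical value for the desired power
    p_avg = (p1 + p2) / 2               # average of the two conversion rates
    return math.ceil(
        (2 * p_avg * (1 - p_avg) * (z_alpha + z_beta) ** 2) / (p1 - p2) ** 2
    )


p1 = 0.2   # Control group conversion rate
p2 = 0.25  # Variant group conversion rate
print(sample_size(p1, p2))
```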

Use this code to calculate the required sample size for your tests.
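
Once a per-group sample size is known, it can be turned into a rough test duration. The sketch below is an assumption-laden estimate: it takes a hypothetical per-group sample size and daily traffic figure, and rounds up to whole weeks so that every day of the week is covered equally. The function name `estimated_test_days` is illustrative.

```python
import math


def estimated_test_days(sample_per_group: int, groups: int,
                        daily_visitors: int, traffic_share: float = 1.0) -> int:
    """Estimate how many days a test must run, rounded up to full weeks.

    traffic_share is the fraction of daily visitors included in the test.
    """
    if daily_visitors <= 0 or not 0 < traffic_share <= 1:
        raise ValueError("daily_visitors must be positive and traffic_share in (0, 1]")
    total_needed = sample_per_group * groups
    days = math.ceil(total_needed / (daily_visitors * traffic_share))
    return math.ceil(days / 7) * 7


# Hypothetical inputs: 1,100 visitors per group, two groups, 400 visitors a day.
days = estimated_test_days(sample_per_group=1100, groups=2, daily_visitors=400)
print(f"Plan to run the test for about {days} days")
```

If the estimate comes out at several months, that is usually a sign to test a bolder change or a higher-traffic page rather than to stop the test early.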

By understanding these common pitfalls, you can ensure more accurate and actionable A/B test results.

Case Studies Of Successful A/B Tests

A/B testing tools help businesses improve their websites and apps. They test different versions to see what works best. Below are some case studies showing how A/B tests brought success.

E-commerce Examples

Many e-commerce sites use A/B testing to boost sales. Here are two examples:

| Company | Tested Element | Result |
|---|---|---|
| Online Store A | Product Page Layout | 15% Increase in Sales |
| Retailer B | Checkout Process | 20% Reduction in Cart Abandonment |

Online Store A changed the layout of their product pages. They tested different images and text placements. The new layout increased sales by 15%.

Retailer B focused on the checkout process. They simplified the steps and added trust badges. This led to a 20% reduction in cart abandonment.

SaaS Industry Examples

Software as a Service (SaaS) companies also benefit from A/B testing. Below are two successful cases:

| Company | Tested Element | Result |
|---|---|---|
| SaaS Company X | Pricing Page | 10% Increase in Conversions |
| Software Firm Y | Signup Form | 25% More Signups |

SaaS Company X optimized their pricing page. They tested different layouts and pricing options. This resulted in a 10% increase in conversions.

Software Firm Y focused on their signup form. They reduced the number of fields and added social proof. This led to a 25% increase in signups.

These case studies show the power of A/B testing. Small changes can lead to big results. Use these examples to inspire your own tests.

Best Practices For Continuous Improvement

Continuous improvement in A/B testing calls for a systematic approach to refining your strategies. Following these practices helps ensure that your testing yields accurate results and meaningful insights for your business.

Iterative Testing

Iterative testing is a key practice in the A/B testing process. This involves running multiple rounds of tests and making small, incremental changes. Each iteration builds on the previous one, leading to continuous improvement.

- Start with a clear hypothesis.

- Run the test and collect data.

- Analyze results and make adjustments.

- Repeat the process with new variations.

By following this cycle, you ensure that each change is backed by data. This helps in refining your strategies over time.

Incorporating Insights

Incorporating insights from your A/B tests is crucial. Without this, the testing process loses its value. Here’s how to effectively integrate your findings:

- Document results: Keep detailed records of your tests and their outcomes.

- Identify patterns: Look for recurring themes in your data.

- Apply learnings: Use these patterns to inform future tests and strategies.

- Share knowledge: Communicate your findings with your team to foster a data-driven culture.

By incorporating insights, you ensure that your A/B testing efforts lead to actionable improvements. This practice enhances the overall effectiveness of your testing strategy.
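
One lightweight way to document results and spot patterns is a structured test log. The sketch below is only an illustration: the `ExperimentRecord` fields and the sample entries are hypothetical, and a shared spreadsheet would serve the same purpose.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class ExperimentRecord:
    name: str
    hypothesis: str
    control_rate: float
    variant_rate: float
    significant: bool
    decision: str


def to_json_line(record: ExperimentRecord) -> str:
    """Serialize one record so it can be appended to a shared log file."""
    return json.dumps(asdict(record), sort_keys=True)


def winners(records):
    """Names of tests where the variant beat the control with significance."""
    return [r.name for r in records
            if r.significant and r.variant_rate > r.control_rate]


# Hypothetical log entries for illustration only.
log = [
    ExperimentRecord("headline-test", "A benefit-led headline gets more clicks",
                     0.05, 0.07, True, "ship variant"),
    ExperimentRecord("button-color", "A higher-contrast button gets more sign-ups",
                     0.10, 0.10, False, "keep control"),
]

for record in log:
    print(to_json_line(record))
print("Winning tests:", winners(log))
```

Recording the hypothesis alongside the outcome makes losing and inconclusive tests as useful as winning ones when planning the next round.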

| Practice | Benefit |
|---|---|
| Iterative Testing | Continuous enhancement of strategies |
| Incorporating Insights | Actionable improvements and informed decisions |

Implementing these best practices helps you make the most of your A/B testing tools. This leads to continuous improvement and better business outcomes.

Future Of A/B Testing Tools

The future of A/B testing tools is bright. These tools are evolving quickly. They are becoming more advanced and user-friendly. The focus is on making testing more effective and insightful.

Emerging Trends

Several emerging trends are shaping the future of A/B testing tools. One trend is the shift towards real-time data analysis. This allows for quicker decision-making. Another trend is the integration of these tools with other marketing platforms. This helps streamline workflows.

Additionally, there is a growing focus on personalization. A/B testing tools are now being designed to handle more personalized experiences. This means testing can be done on more specific audience segments. This results in more accurate and useful insights.

Impact Of AI And Machine Learning

The impact of AI and Machine Learning on A/B testing tools is significant. These technologies are making testing smarter and more efficient. AI can analyze large amounts of data quickly. It can identify patterns that humans might miss. This leads to more accurate test results.

Machine Learning algorithms can also help in predicting outcomes. They can suggest the best variations to test. This saves time and resources. It makes the testing process more effective. The use of AI and Machine Learning is set to grow. It will continue to enhance the capabilities of A/B testing tools.
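
One well-known technique in this area is the multi-armed bandit, which shifts traffic toward the better-performing variation while a test is still running. The sketch below uses Thompson sampling as an example; it is an illustration of the general idea, not a description of how any particular tool works, and the conversion rates in the simulation are hypothetical.

```python
import random


def thompson_pick(stats, rng):
    """Pick a variant by sampling a plausible conversion rate for each.

    stats maps variant name -> (successes, failures). Each draw comes from
    a Beta distribution, so variants with little data still get explored.
    """
    draws = {
        name: rng.betavariate(successes + 1, failures + 1)
        for name, (successes, failures) in stats.items()
    }
    return max(draws, key=draws.get)


def simulate(true_rates, rounds, seed=0):
    """Simulate visitors and return how often each variant was shown."""
    rng = random.Random(seed)
    stats = {name: (0, 0) for name in true_rates}
    shown = {name: 0 for name in true_rates}
    for _ in range(rounds):
        choice = thompson_pick(stats, rng)
        shown[choice] += 1
        successes, failures = stats[choice]
        if rng.random() < true_rates[choice]:
            stats[choice] = (successes + 1, failures)
        else:
            stats[choice] = (successes, failures + 1)
    return shown


# Hypothetical true conversion rates, unknown to the algorithm.
shown = simulate({"A": 0.05, "B": 0.07}, rounds=5000)
print(shown)
```

The trade-off is that a bandit optimizes for conversions during the test, while a classic fixed-split A/B test gives a cleaner measurement of the difference between versions.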

| Feature | Benefit |
|---|---|
| Real-Time Data Analysis | Quicker decision-making |
| Integration with Marketing Platforms | Streamlined workflows |
| Personalization | More accurate insights |
| AI and Machine Learning | Smarter testing |

In conclusion, the future of A/B testing tools is promising. With emerging trends and the impact of AI and Machine Learning, these tools will continue to evolve and improve.


Frequently Asked Questions

What Is A/B Testing?

A/B testing is a method to compare two versions of a webpage or app. It helps determine which one performs better.

How Do A/B Testing Tools Work?

A/B testing tools split your audience into two groups. They show each group a different version to measure performance differences.

Why Use A/B Testing Tools?

A/B testing tools help improve user experience and conversion rates. They provide data-driven insights for better decision-making.

What Are The Benefits Of A/B Testing?

Benefits of A/B testing include increased conversions, better user engagement, and more effective marketing strategies. It helps optimize performance.

Conclusion

A/B testing tools are effective for improving your website’s performance. They provide clear insights. You can easily identify what works best. These tools help make data-driven decisions. Small changes can lead to big results. Testing can boost user engagement and conversions.

It’s essential for any digital strategy. Invest time in A/B testing. Achieve better outcomes and growth. Start testing today and see the difference.