A/B testing tools with analytics help improve your website’s performance. They compare two versions of a webpage to find out which one works better.

By showing different versions of a page to real visitors, these tools reveal user preferences and behavior, so you can base decisions on data instead of guesswork.

Because they combine testing with analytics, they give you both the experiment and the measurements needed to judge it. That helps you make informed changes that improve conversion rates and user experience. This blog post explores why A/B testing tools with analytics matter and how to use them. Let’s see how they can transform your website’s performance.

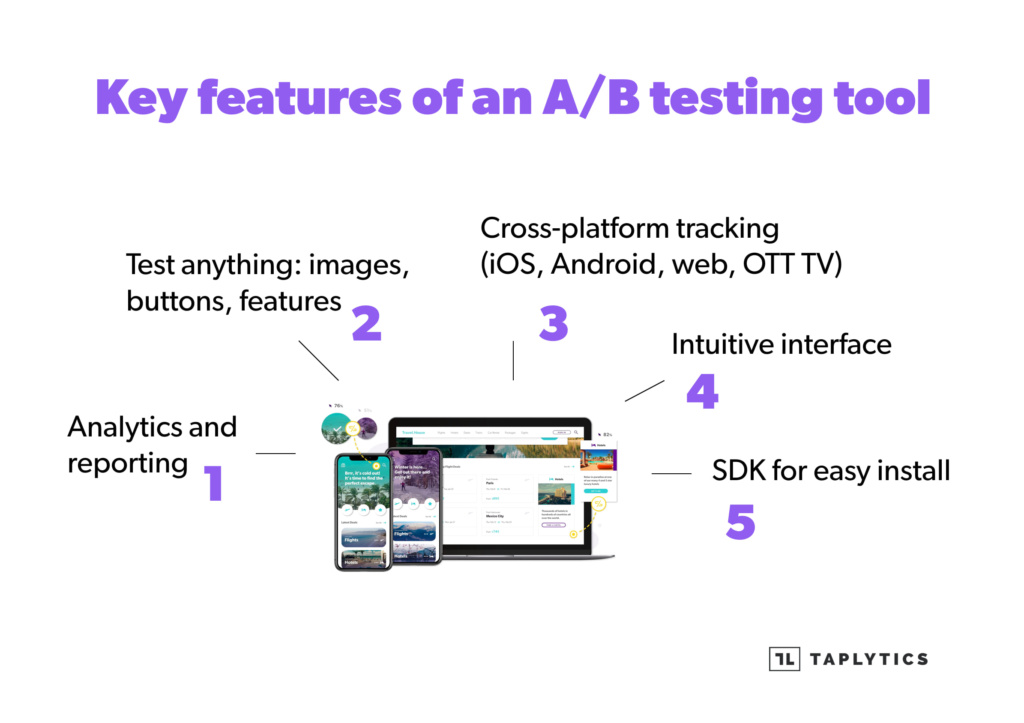

Credit: taplytics.com

Introduction To A/B Testing

A/B testing is a powerful method used to compare two versions of a web page or app against each other. The goal is to determine which version performs better. This type of testing helps businesses make informed decisions based on real data. It is essential for improving user experience and increasing conversions.

Importance Of A/B Testing

A/B testing plays a crucial role in digital marketing. It helps businesses understand what works best for their audience. Testing different elements, such as headlines, images, and calls to action, can lead to significant improvements. Here are some key benefits:

- Increased conversion rates

- Better user experience

- Data-driven decisions

- Reduced bounce rates

How A/B Testing Works

A/B testing involves creating two versions of a web page: Version A (the control) and Version B (the variation). Each version is shown to a different group of users. Analytics tools then measure the performance of each version. The key steps include:

- Identify goals: Determine what you want to achieve, such as higher clicks or sales.

- Create variations: Make a new version of your web page with changes.

- Split traffic: Divide your audience into two groups. Show each group one version.

- Collect data: Use analytics tools to track user behavior and outcomes.

- Analyze results: Compare the performance of both versions. Identify the winner.

The results from A/B testing provide valuable insights. They help you make improvements based on real user data. This method is a reliable way to enhance your website or app and achieve better outcomes.

Choosing The Right A/B Testing Tools

Choosing the right A/B testing tools can be a daunting task. The right tool can help optimize your website or app. It’s crucial to find a tool that suits your needs. This section will help you make an informed decision.

Factors To Consider

Several factors play a role in choosing the right A/B testing tool. Here are a few key considerations:

- Ease of Use: The tool should be user-friendly.

- Integration: Ensure it integrates well with your existing systems.

- Cost: Consider your budget and the tool’s pricing model.

- Support: Good customer support can save time and hassle.

- Features: Look for features like segmentation, targeting, and multivariate testing.

Popular A/B Testing Tools

Here are some popular A/B testing tools that many businesses use:

| Tool | Key Features | Price Range |
|---|---|---|
| Optimizely | Easy to use, robust analytics, multiple integrations | $$$ |
| VWO | Heatmaps, visual editor, A/B and multivariate testing | $$ |
| Google Optimize (discontinued September 2023) | Free, integrates with Google Analytics, user-friendly | $ |
| AB Tasty | Personalization, audience targeting, collaborative tools | $$ |

Each of these tools has its own set of advantages. For instance, Optimizely is known for its robustness, while Google Optimize was appreciated for being cost-effective until Google discontinued it in September 2023. Your choice will depend on your specific needs and budget.

Setting Up Your A/B Test

Setting up your A/B test is a crucial step in understanding user behavior. It helps you make data-driven decisions. To start, you need to define your goals and create variations. Let’s dive into each step.

Defining Goals

Defining clear goals is the first step in setting up your A/B test. A well-defined goal helps you measure success accurately. Here are some common goals:

- Increase in click-through rate (CTR)

- Higher conversion rate

- Reduced bounce rate

- Improved user engagement

Use specific and measurable goals. For example, aim to increase the CTR by 15%. This will make it easier to track your progress.
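To make a goal like this concrete, it helps to translate the relative increase into a target rate. The sketch below assumes a hypothetical baseline CTR of 4% (not a figure from this post) and treats the 15% as a relative lift, not 15 percentage points.

```python
def target_rate(baseline_rate: float, relative_lift: float) -> float:
    """Return the rate that corresponds to a relative lift over the baseline."""
    return baseline_rate * (1 + relative_lift)


baseline_ctr = 0.04  # hypothetical: 4% of visitors click today
goal_lift = 0.15     # the 15% relative increase from the example above

target_ctr = target_rate(baseline_ctr, goal_lift)
print(f"Baseline CTR: {baseline_ctr:.2%}")
print(f"Target CTR:   {target_ctr:.2%}")
```

Writing the target down as an absolute rate before the test starts makes it harder to reinterpret the goal after the results arrive.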

Creating Variations

Creating variations involves designing different versions of your web page or element. These variations will be tested against each other. Here are steps to create variations:

- Identify the element you want to test (e.g., headline, button color).

- Create Version A (the control) and Version B (the variation).

- Make the difference between the versions large enough that it could plausibly change user behavior.

For example, if you are testing a headline, Version A might read “Buy Now” and Version B “Shop Today”. This clear difference helps you understand which version performs better.

In summary, setting up your A/B test with clear goals and distinct variations is key. It allows you to gather meaningful data for better decision-making.

Running Your A/B Test

Running your A/B test effectively requires careful planning and execution. This ensures you gather the most accurate data and insights. Let’s delve into two critical aspects of running your A/B test: Traffic Distribution and Test Duration.

Traffic Distribution

Proper traffic distribution is crucial for an accurate A/B test. Split your audience randomly, and usually evenly, between the versions so that the two groups are comparable and the results are not biased. Here are some steps:

- Determine the sample size needed for statistical significance.

- Use a tool to randomly assign users to either version A or B.

- Ensure the distribution remains consistent throughout the test.
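One common way to get a random yet consistent split is to hash a stable user identifier. The sketch below is an illustration under assumptions, not how any particular tool works internally: the function name `assign_variant`, the experiment name, and the `user-N` IDs are all hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "homepage-headline") -> str:
    """Deterministically assign a user to 'A' or 'B' with a 50/50 split."""
    # Hashing the experiment name together with the user ID gives each
    # experiment its own independent split of the same audience.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return "A" if bucket < 50 else "B"


# Check the split on hypothetical user IDs.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}")] += 1
print(counts)
```

Because the assignment depends only on the user ID and the experiment name, a returning visitor always sees the same version, which keeps the distribution consistent for the whole test.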

Testing tools such as Optimizely or VWO automate random assignment and reduce manual errors, while an analytics platform such as Google Analytics records what each group does. Below is a table comparing some popular tools:

| Tool | Feature | Ease of Use |
|---|---|---|
| Google Analytics | Free, Comprehensive Data | Moderate |
| Optimizely | Advanced Targeting | Easy |
| VWO | Heatmaps, Session Recording | Easy |

Test Duration

Determining the right test duration is essential for gathering reliable data. Running a test for too short a time can lead to inaccurate results.

Here are some factors to consider:

- Sample size: The larger the sample you need and the lower your traffic, the longer the test must run.

- Seasonality: Consider any external factors that might affect user behavior.

- Business cycles: Align your test duration with your business cycles for more accurate data.

Many A/B testing tools offer built-in calculators to help determine the optimal test duration. Utilize these features to ensure your test runs long enough to produce reliable results.
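If you want to see roughly what such a calculator does, the sketch below uses the standard normal-approximation formula for comparing two proportions. The inputs are hypothetical: a 5% baseline conversion rate, a 7% expected rate, 1,000 visitors per day, a 5% significance level, and 80% power. Rounding up to whole weeks is an assumption made here to cover weekly cycles, not a rule taken from any specific tool.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(p_control: float, p_variant: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed per version to detect the given difference."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p_control * (1 - p_control) + p_variant * (1 - p_variant)
    n = (z_alpha + z_beta) ** 2 * variance / (p_variant - p_control) ** 2
    return math.ceil(n)


n = sample_size_per_variant(0.05, 0.07)  # hypothetical rates
daily_visitors = 1_000                   # hypothetical total traffic, split 50/50

days = math.ceil(2 * n / daily_visitors)
full_weeks = math.ceil(days / 7)         # assumption: run whole weeks only

print(f"Visitors needed per version: {n}")
print(f"Minimum duration: {days} days, rounded up to {full_weeks} week(s)")
```

Smaller expected differences push the required sample size up quickly, because the difference appears squared in the denominator.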

Analyzing A/B Test Results

Analyzing A/B test results is crucial for making data-driven decisions. Understanding the data helps you see what changes work best. This section will guide you through the process of interpreting data and determining statistical significance.

Interpreting Data

Interpreting data from A/B tests involves looking at different metrics. These metrics include conversion rates, click-through rates, and bounce rates. By comparing these metrics, you can see which version performs better.

Here is a simple table to illustrate how to compare metrics:

| Metric | Version A | Version B |
|---|---|---|
| Conversion Rate | 5% | 7% |
| Click-Through Rate | 10% | 12% |
| Bounce Rate | 40% | 35% |

From the table, you can see that Version B has better conversion and click-through rates and a lower bounce rate. If these differences turn out to be statistically significant, Version B is the stronger performer overall.
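A quick way to compare the versions is to express each difference as a relative change. The sketch below uses the illustrative figures from the table above; the helper name `relative_change` is just for this example.

```python
# Illustrative figures from the table above: (Version A, Version B)
metrics = {
    "Conversion Rate": (0.05, 0.07),
    "Click-Through Rate": (0.10, 0.12),
    "Bounce Rate": (0.40, 0.35),
}


def relative_change(a: float, b: float) -> float:
    """Change from Version A to Version B, relative to Version A."""
    return (b - a) / a


changes = {name: relative_change(a, b) for name, (a, b) in metrics.items()}
for name, change in changes.items():
    print(f"{name}: {change:+.1%}")
```

A two-point gain in conversion rate from 5% to 7% is a 40% relative lift, which is why relative and absolute changes should always be labeled clearly in reports.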

Statistical Significance

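Statistical significance tells you whether the difference between versions is likely to be real rather than due to chance. The usual measure is the p-value: the probability of seeing a difference at least this large if the two versions actually performed the same.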

Follow these steps to calculate statistical significance:

- Collect data for both versions.

- Use a statistical tool to calculate the p-value.

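- If the p-value is less than 0.05, the conventional threshold, treat the result as statistically significant.

A statistically significant result is unlikely to be explained by random variation alone, so you can act on it with more confidence. It does not guarantee that the effect is large or that it will persist.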
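As a rough illustration of the calculation a statistical tool performs, here is a two-proportion z-test written with only the Python standard library. The visitor and conversion counts are hypothetical, and real tools may use a different test.

```python
import math


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both versions perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_error
    # Two-sided tail probability of the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical counts: 5% vs 7% conversion at two different sample sizes.
p_large = two_proportion_p_value(100, 2_000, 140, 2_000)
p_small = two_proportion_p_value(10, 200, 14, 200)

print(f"2,000 visitors per version: p = {p_large:.4f}")
print(f"200 visitors per version:   p = {p_small:.4f}")
```

The same 5% and 7% rates fall below the 0.05 threshold with 2,000 visitors per version but not with 200, which is why sample size matters as much as the observed difference.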

In summary, effective A/B testing requires proper data interpretation and understanding statistical significance. Use these insights to improve your website’s performance.

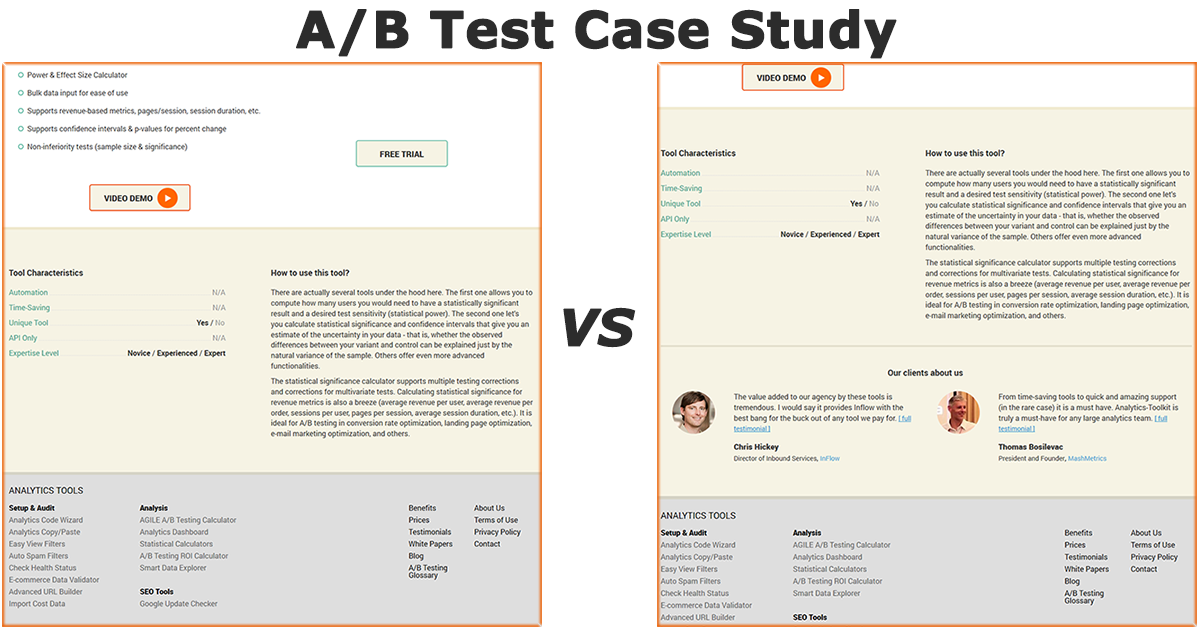

Credit: blog.analytics-toolkit.com

Integrating Analytics

Integrating analytics into your A/B testing tools is crucial. It helps you understand user behavior and measure conversion rates accurately. This detailed insight allows you to make data-driven decisions. Let’s explore how to track user behavior and measure conversion rates using analytics.

Tracking User Behavior

Tracking user behavior is essential for A/B testing. It helps you see how users interact with different versions of your site. Key metrics to track include:

- Page Views: Number of times a page is viewed.

- Bounce Rate: Percentage of visitors who leave after viewing one page.

- Session Duration: Average time users spend on your site.

- Click-Through Rate: Percentage of users who click on a specific link.

Use tools like Google Analytics to collect and analyze this data. These tools provide detailed reports and visualizations. This helps identify patterns and areas for improvement.
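To show how these metrics are derived, the sketch below computes them per version from raw session records. The `Session` structure and the sample data are hypothetical and are not the export format of any particular analytics tool.

```python
from dataclasses import dataclass


@dataclass
class Session:
    variant: str             # "A" or "B"
    pages_viewed: int
    duration_seconds: float
    clicked_cta: bool        # did the user click the tracked link?


def summarize(sessions: list[Session]) -> dict[str, float]:
    """Compute the four metrics listed above for one group of sessions."""
    total = len(sessions)
    return {
        "page_views": sum(s.pages_viewed for s in sessions),
        # A bounce is a session that viewed exactly one page.
        "bounce_rate": sum(s.pages_viewed == 1 for s in sessions) / total,
        "avg_session_seconds": sum(s.duration_seconds for s in sessions) / total,
        "click_through_rate": sum(s.clicked_cta for s in sessions) / total,
    }


# Hypothetical sample data, not real measurements.
sessions = [
    Session("A", 3, 120.0, True),
    Session("A", 1, 15.0, False),
    Session("A", 1, 10.0, False),
    Session("A", 4, 200.0, True),
    Session("B", 2, 90.0, True),
    Session("B", 5, 240.0, True),
    Session("B", 1, 12.0, False),
    Session("B", 3, 150.0, False),
]

report = {
    variant: summarize([s for s in sessions if s.variant == variant])
    for variant in ("A", "B")
}
for variant, stats in report.items():
    print(variant, stats)
```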

Measuring Conversion Rates

Measuring conversion rates is vital for assessing the success of your A/B tests. A conversion occurs when a user completes a desired action. Common conversion goals include:

- Making a purchase.

- Signing up for a newsletter.

- Filling out a contact form.

To measure conversion rates, follow these steps:

- Set up conversion tracking in your analytics tool.

- Define your conversion goals.

- Analyze the data to determine which version performs better.

Most A/B testing tools offer built-in analytics features. These features simplify the process of measuring and comparing conversion rates. By integrating analytics, you can make informed decisions. This leads to improved user experience and increased conversions.
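If you ever need to check a tool's numbers by hand, the calculation can be sketched as follows. The event tuples and the `purchase` goal name are hypothetical. Counting unique users rather than raw events is a design choice made here so that repeat purchases do not inflate the rate.

```python
from collections import defaultdict

# Hypothetical event log: (user_id, variant, event_name)
events = [
    ("u1", "A", "visit"), ("u1", "A", "purchase"),
    ("u2", "A", "visit"),
    ("u3", "B", "visit"), ("u3", "B", "purchase"),
    ("u4", "B", "visit"), ("u4", "B", "purchase"), ("u4", "B", "purchase"),
    ("u5", "B", "visit"),
    ("u6", "A", "visit"),
]


def conversion_rates(events, goal: str = "purchase") -> dict[str, float]:
    """Share of unique users per variant who completed the goal at least once."""
    visitors = defaultdict(set)
    converters = defaultdict(set)
    for user_id, variant, event_name in events:
        visitors[variant].add(user_id)
        if event_name == goal:
            converters[variant].add(user_id)
    return {
        variant: len(converters[variant]) / len(users)
        for variant, users in visitors.items()
    }


rates = conversion_rates(events)
for variant in sorted(rates):
    print(f"Version {variant}: {rates[variant]:.1%}")
```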

Best Practices For A/B Testing

A/B testing is essential for optimizing your website or app. Proper methods ensure you get accurate results and make better decisions. Here are some best practices to follow for successful A/B testing.

Avoiding Common Mistakes

Avoid common mistakes to get reliable results. Here are a few tips:

- Test one variable at a time: Changing multiple variables can confuse results.

- Run tests long enough: Short tests may not show true user behavior.

- Segment your audience: Different user groups may react differently.

- Use a large sample size: Small samples can lead to inaccurate conclusions.

Continuous Testing

Continuous testing is key to ongoing improvement. Always look for new areas to test and optimize.

Here’s a simple plan to follow:

- Identify areas to improve.

- Create hypotheses for changes.

- Run A/B tests to validate hypotheses.

- Implement winning variations.

- Repeat the process.

By following these steps, you ensure your site or app stays optimized.

Remember, successful A/B testing means avoiding common mistakes and testing continuously. Stay focused and keep experimenting for the best results.

Case Studies

Case studies are powerful tools that highlight the effectiveness of A/B testing tools with analytics. They show real-world applications and results. Let’s explore a few examples to better understand the impact of A/B testing tools.

Successful A/B Testing Examples

Many companies have used A/B testing to improve their websites. Below are some notable examples:

| Company | Objective | Result |
|---|---|---|
| Airbnb | Optimize search results | Increased bookings by 5% |
| | Improve ad performance | Boosted ad clicks by 15% |
| Amazon | Enhance checkout process | Reduced cart abandonment by 10% |

Lessons Learned

Key lessons from these case studies are:

- Test one variable at a time: This helps identify what truly makes a difference.

- Use clear metrics: Define what success looks like before starting.

- Be patient: Significant changes might take time to show results.

These lessons help in understanding how to effectively use A/B testing tools.

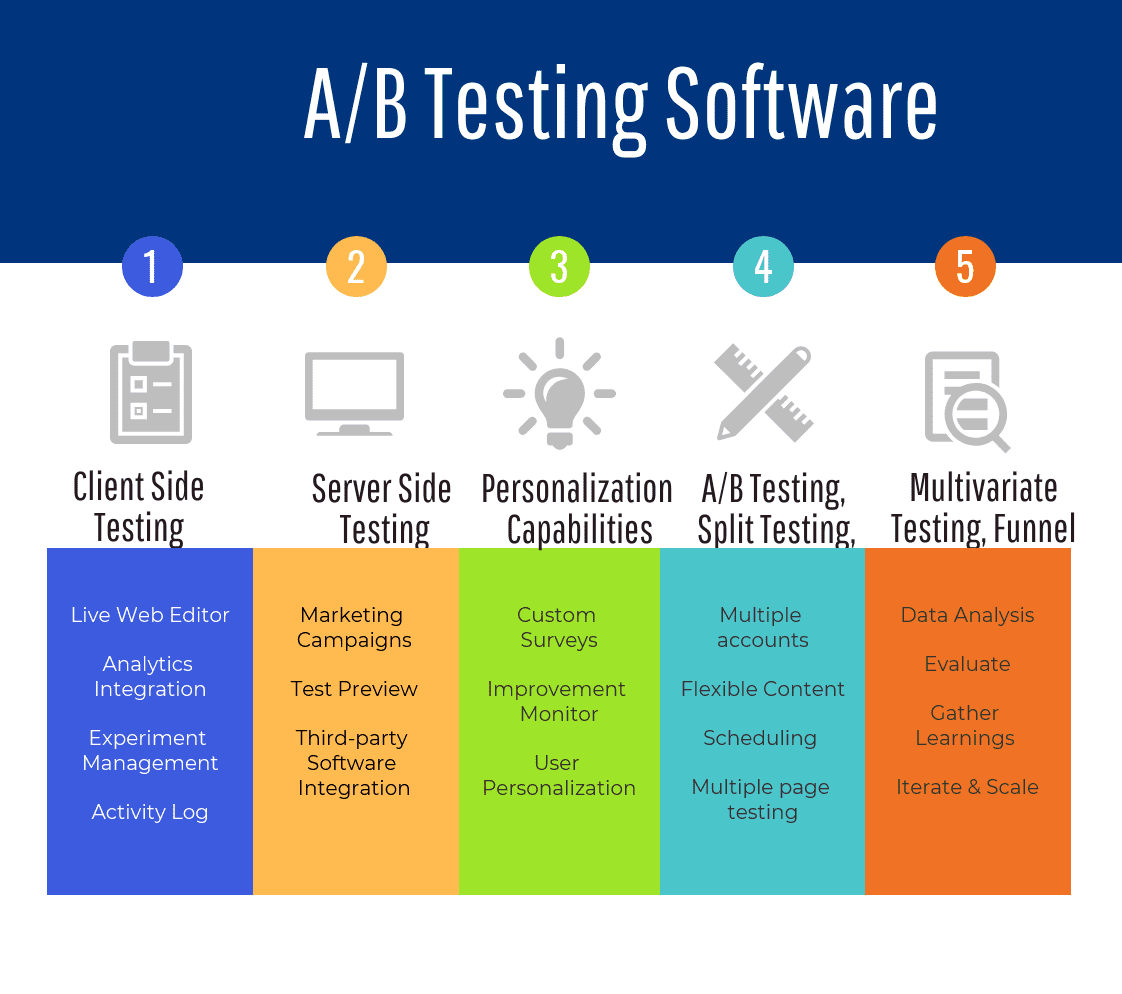

Future Trends In A/B Testing

A/B testing tools have evolved significantly over the past few years. The future holds even more exciting advancements. Companies are continuously seeking ways to optimize their campaigns. They aim to boost conversion rates and improve user experiences. Let’s dive into some future trends in A/B testing that are shaping the landscape.

AI And Machine Learning

Artificial Intelligence (AI) and Machine Learning (ML) are transforming A/B testing. These technologies help to analyze large sets of data quickly. AI can surface patterns and predict outcomes that are hard to spot with manual analysis.

Here are some benefits of AI and ML in A/B testing:

- Faster Analysis: AI processes data much faster than humans.

- Accurate Predictions: ML algorithms can predict user behavior more accurately when they have enough good-quality data.

- Automated Testing: AI can automate the A/B testing process, saving time and resources.

This integration means businesses can make data-driven decisions more efficiently. It also allows for continuous optimization of their strategies.
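One well-known technique for automating traffic allocation is a multi-armed bandit. The sketch below uses Thompson sampling as an example of that idea. It is not a description of how any specific product works, and the conversion rates are hypothetical values used only to simulate visitors.

```python
import random


def thompson_sampling(true_rates: dict[str, float], rounds: int = 20_000,
                      seed: int = 0) -> dict[str, int]:
    """Simulate adaptive traffic allocation and return impressions per variant."""
    rng = random.Random(seed)
    successes = {v: 0 for v in true_rates}
    failures = {v: 0 for v in true_rates}
    shown = {v: 0 for v in true_rates}

    for _ in range(rounds):
        # Draw a plausible conversion rate for each variant from its
        # Beta posterior, then show the variant with the highest draw.
        draws = {
            v: rng.betavariate(successes[v] + 1, failures[v] + 1)
            for v in true_rates
        }
        chosen = max(draws, key=draws.get)
        shown[chosen] += 1

        # Simulate whether this visitor converts (hypothetical true rates).
        if rng.random() < true_rates[chosen]:
            successes[chosen] += 1
        else:
            failures[chosen] += 1
    return shown


shown = thompson_sampling({"A": 0.05, "B": 0.10})
print(shown)
```

Unlike a fixed 50/50 split, this approach shifts traffic toward the better-performing version as evidence accumulates, at the cost of making a classical significance test harder to apply.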

Personalization

Personalization is a growing trend in A/B testing. Personalized experiences are key to improving user engagement and satisfaction. By tailoring content and offers, companies can better meet user needs.

Personalization in A/B testing can be achieved through:

- Segmented Testing: Testing different variations for different user segments.

- Dynamic Content: Adjusting content based on user behavior and preferences.

- User Profiles: Creating detailed user profiles to guide testing strategies.

By leveraging personalization, companies can create more relevant and effective campaigns. This not only increases conversion rates but also enhances the overall user experience.
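Segmented testing boils down to breaking results out by segment before comparing versions. The sketch below uses a handful of hypothetical records. The segment names and outcomes are made up, and the sample is far too small to draw real conclusions.

```python
from collections import defaultdict

# Hypothetical records: (segment, variant, converted)
records = [
    ("mobile", "A", False), ("mobile", "A", True),
    ("mobile", "B", True), ("mobile", "B", True),
    ("desktop", "A", True), ("desktop", "A", True),
    ("desktop", "B", False), ("desktop", "B", True),
]


def conversion_by_segment(records) -> dict[tuple[str, str], float]:
    """Conversion rate for every (segment, variant) pair."""
    totals = defaultdict(int)
    conversions = defaultdict(int)
    for segment, variant, converted in records:
        totals[(segment, variant)] += 1
        conversions[(segment, variant)] += int(converted)
    return {key: conversions[key] / totals[key] for key in totals}


by_segment = conversion_by_segment(records)
for (segment, variant), rate in sorted(by_segment.items()):
    print(f"{segment:8s} Version {variant}: {rate:.0%}")
```

In this made-up data, Version B wins on mobile while Version A wins on desktop, a pattern that an overall average would hide.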

| Trend | Benefit |
|---|---|
| AI and Machine Learning | Faster and more accurate data analysis |
| Personalization | Improved user engagement and satisfaction |

These future trends in A/B testing are set to revolutionize how companies approach optimization. By embracing these innovations, businesses can stay ahead of the curve and achieve better results.

Credit: www.predictiveanalyticstoday.com

Frequently Asked Questions

What Are A/B Testing Tools?

A/B testing tools help compare two versions of a webpage to determine which performs better. They provide analytics to measure user engagement and conversion rates.

Why Use Analytics In A/B Testing?

Analytics in A/B testing helps track user behavior and measure the effectiveness of changes. It ensures data-driven decisions for optimization.

Which Are Popular A/B Testing Tools?

Popular A/B testing tools include Optimizely, VWO, and AB Tasty. Google Optimize was also widely used until Google discontinued it in September 2023. These tools offer robust features and detailed analytics.

How Does A/B Testing Improve Website Performance?

A/B testing identifies what works best for users. It improves website performance by increasing conversions, engagement, and overall user experience.

Conclusion

Choosing the right A/B testing tools can improve your website’s performance. Combined with strong analytics, these tools help you understand your audience, make data-driven decisions, enhance user experience, and increase conversions. Always test and analyze results to optimize your strategies.

Stay ahead with continuous improvement and learning. Happy testing!